Chapter 4 Reciprocity and cooperation

4.1 In a nutshell

Laboratory experiments have shown that people behave differently from what rational choice theory predicts whenever third parties (other players or experimenters) are involved. These deviations all go in the same direction: choices are more prosocial than predicted, at a cost to the individual.

This makes sense from a cultural evolutionary perspective: in tight-knit human societies, some degree of prosocial behaviour is adaptive. Indeed, humans seem to be particularly efficient at social cognition tasks, such as detecting cheaters or managing their own reputation. Interventions leveraging social cognition can thus be extremely powerful, whether they aim to correct the perception of norms or to change social norms.

4.3 Cooperation in the lab

A large body of literature from experimental economics documents how, in the very abstract setting of the lab, people predictably deviate from the predictions of rational choice when they think they are interacting with another person. Most of these experiments are variations of a small set of games. I think you need to be familiar with them since they are widely used to model policy issues such as carbon footprint reduction (a prisoners’ dilemma).

4.3.1 A basic grammar of games

This section provides a very quick introduction to the most frequently used games, and how their results commonly diverge from rational choice theory predictions.

In the Dictator game (description), you get a set amount of money, say €10, which you have to split with another player, whom you’ll never know and who will never know you. The only rational equilibrium of this game is to keep the whole sum, since giving some of it away provides you with no benefit. In practice, the modal offers are in the 20%-30% range, and only 1/5 of typical participants offer nothing. The setup being very easy to explain and reproduce, this game has been widely replicated outside WEIRD samples, with consistent results.

In the Ultimatum game (description), the first player offers a split to the second player, who can either accept or refuse it. If the second player refuses, both players get nothing. Again, the Nash equilibrium is to offer 0, or the closest possible amount to zero, since the second player is better off with something than with nothing. In actual experiments, the modal offer is around 50%, and offers below 30% are often rejected, with a rejection rate over 1/2 for the few offers lower than 20%.

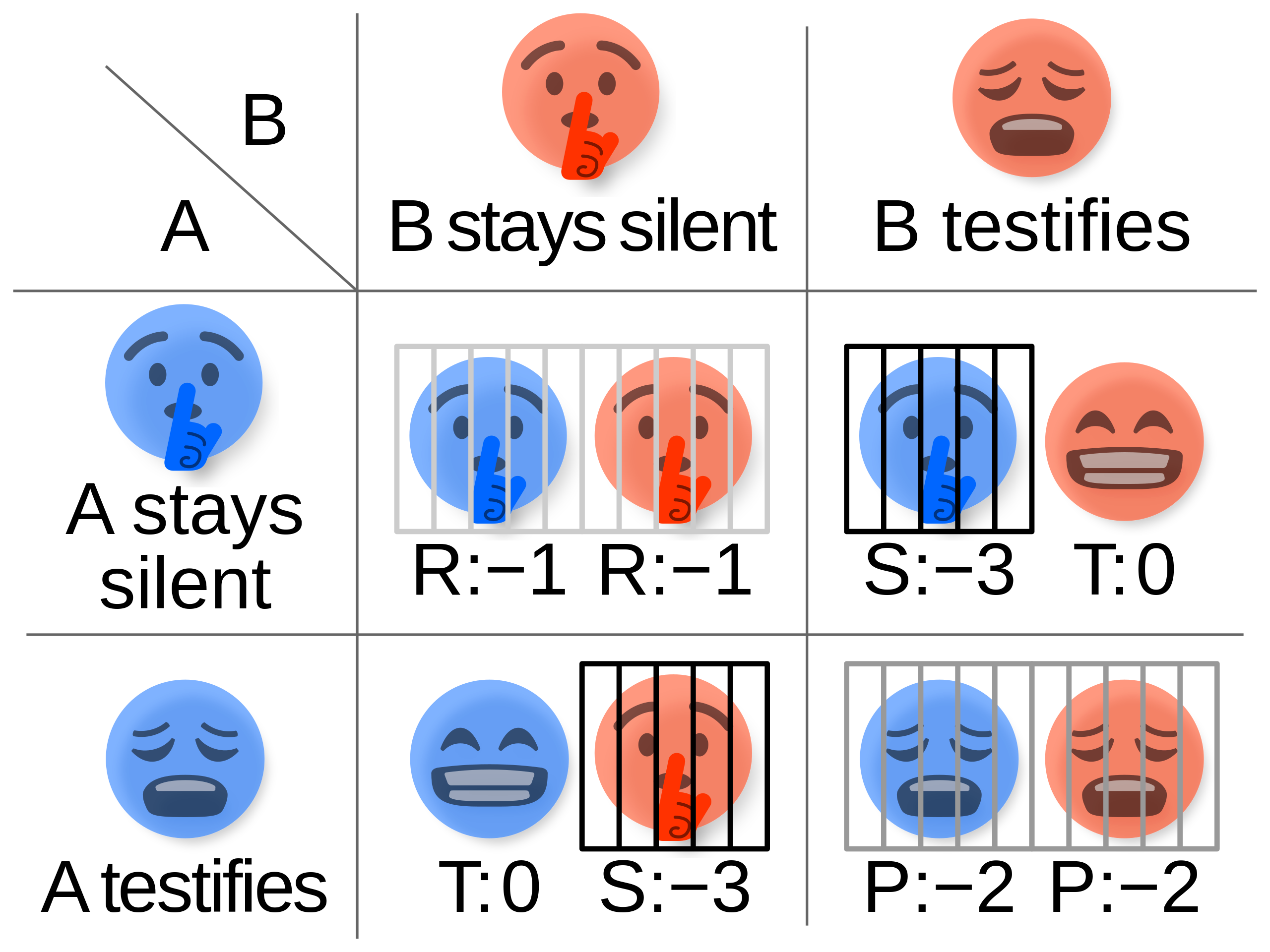

Figure 4.3: Sample payoff matrix for the Prisoner’s dilemma

In the Prisoner’s dilemma (description), each of the two players has to choose either to cooperate (stay silent) or to defect (testify). If both cooperate, they get a light sentence. If both testify, they both get a medium sentence. If only one testifies, that player gets away while the other gets a hard sentence. This game is set up in such a way that the only rational equilibrium is for both to testify, which leaves both players worse off than if they had both stayed silent.
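The equilibrium argument can be checked mechanically. The sketch below is only an illustration: the sentence lengths (in years) are hypothetical values chosen to respect the ordering described above, not the values from Figure 4.3, and the names `SENTENCE`, `best_response` and `nash_equilibria` are made up for this example.

```python
# Hypothetical sentences in years (lower is better for the player).
# Keys are (my action, other player's action); values are (my sentence, their sentence).
SENTENCE = {
    ("silent", "silent"): (1, 1),      # both cooperate: light sentence
    ("silent", "testify"): (10, 0),    # I stay silent, the other testifies
    ("testify", "silent"): (0, 10),    # I testify, the other stays silent
    ("testify", "testify"): (5, 5),    # both testify: medium sentence
}
ACTIONS = ("silent", "testify")


def best_response(other_action):
    """Action that minimises my own sentence, given the other player's action."""
    return min(ACTIONS, key=lambda mine: SENTENCE[(mine, other_action)][0])


def nash_equilibria():
    """Pure-strategy pairs in which each action is a best response to the other.

    The game is symmetric, so the same best_response function serves both players.
    """
    return [
        (a, b)
        for a in ACTIONS
        for b in ACTIONS
        if a == best_response(b) and b == best_response(a)
    ]


if __name__ == "__main__":
    for other in ACTIONS:
        print(f"If the other player chooses {other!r}, my best response is {best_response(other)!r}")
    print("Equilibria:", nash_equilibria())
```

With these assumed numbers, testifying is the best response whatever the other player does, so mutual testimony is the only equilibrium even though mutual silence would give both players a shorter sentence.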

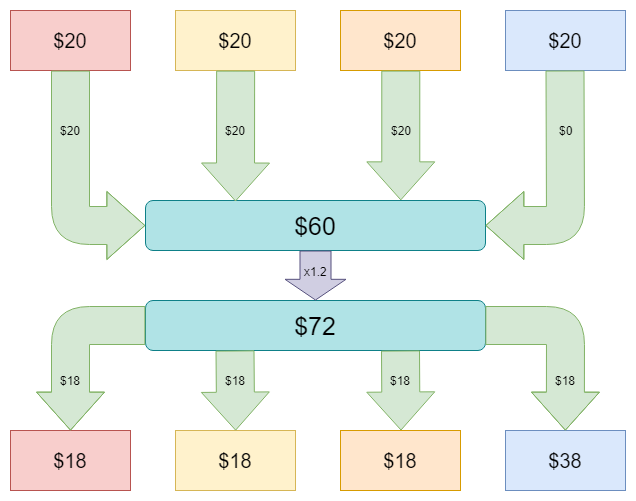

In a Public good game (description), players get an initial endowment. They can put any fraction of it in a public pot. The sum in the public pot is then multiplied by some factor, and split evenly between all players. In the example illustrated on the right, the multiplication factor is low, 1.2, making it worthwhile to invest in the public good only under an expectation that other players will also invest at least 80% of their endowment. In practice, the mean contribution falls most of the time in the 40%-60% range, but the underlying distribution is highly bimodal: most people invest either all or none of their endowment.
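The payoff rule can be written down in a few lines. This is a minimal sketch under stated assumptions: the group size of 4 and the endowment of 10 are hypothetical (the figure's actual parameters are not reproduced here), only the factor 1.2 comes from the text, and the function names are illustrative.

```python
def payoffs(contributions, endowment=10.0, factor=1.2):
    """Final payoff of each player: what they kept plus an equal share of the multiplied pot."""
    n = len(contributions)
    share = factor * sum(contributions) / n
    return [endowment - c + share for c in contributions]


def break_even_share(n, factor=1.2):
    """Average fraction of their endowment the other players must contribute
    for a player who contributes everything to end up with at least their endowment.

    Derived from factor * (1 + (n - 1) * f) / n >= 1.
    """
    return (n / factor - 1) / (n - 1)


if __name__ == "__main__":
    print("Everyone contributes all:", payoffs([10, 10, 10, 10]))
    print("Nobody contributes:      ", payoffs([0, 0, 0, 0]))
    print("One free rider:          ", payoffs([0, 10, 10, 10]))
    print("Break-even share, n=4:   ", round(break_even_share(4), 3))
```

Under these assumptions, each unit contributed returns only 1.2 / 4 = 0.3 units to the contributor, so free riding always pays individually, while universal contribution still beats universal free riding. With four players, a full contributor recovers the endowment only if the others contribute about 78% of theirs on average, which is in the neighbourhood of the 80% threshold quoted above; the exact figure depends on the group size.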

Figure 4.4: Sample public good game

In each case above, the deviations from the expected rational equilibrium seem to rely on some form of expectation of reciprocity. In the dictator game, players interviewed about their motivations state a sense of fairness (more on this later). In the ultimatum game, they also refer to a perceived threat of retaliation against too low an offer, a form of negative reciprocity. In some tribal societies, people also reject offers deemed too high, because they generate too large an obligation for the recipient. Despite the abstract lab setting (and the fact that they actually often play against computers), people include in their assessment a dimension of repeated, social interaction, and build their decision on this context.

4.3.2 Let’s play again

What happens when people play not one, but several rounds in a row? In this repeated games setting, game theory predictions become murkier. The Folk Theorem, so called because it was deemed common knowledge when the need to make a formal reference to it arose, states that basically any reasonable equilibrium can emerge from a repeated game, provided players are rational and patient enough.

In practice, computer programs are a convenient way to generate large numbers of interactions while explicitly specifying strategies and degrees of patience. A famous instance is (Axelrod and Hamilton 1981) [Axelrod, Robert, and William D. Hamilton. 1981. “The Evolution of Cooperation.” Science 211 (4489): 1390–96. https://doi.org/10.1126/science.7466396.], which showed that cooperation in a repeated prisoner’s dilemma can be sustained by a simple Tit for Tat strategy: cooperate first, then copy the other player’s previous move. In fact, this strategy empirically outperforms most, if not all, more sophisticated strategies. The demonstration relied on an evolutionary setting: at each stage, each individual is paired with another one, and plays a prisoner’s dilemma. A share of the individuals with the lowest gains (e.g. the bottom 10%) is removed, and replaced by a population representative of the individuals with higher gains.

This is of course a kind of worst-case scenario: what the experiment shows is that even when payoffs are designed to discourage cooperation and you have no information about other people’s strategies, cooperative behaviours can be sustained by a very simple punishment heuristic. Starting from this insight, it is straightforward to see that sustaining cooperation would be easier with more information about other people, such as the share of each population or, even better, a hint about the strategy of the person you deal with. A core issue of sustainable cooperation is thus the ability to reliably identify cooperators and to avoid defectors.
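To make the evolutionary setting concrete, here is a toy sketch. It is not a reproduction of the original simulations. It rests on several assumptions: a field of only three hypothetical strategies, per-round payoffs of 5, 3, 1 and 0 (values commonly used for this game), 200 rounds per match, and a deterministic update in which each strategy's population share grows in proportion to its average gain, standing in for the remove-and-replace rule described above.

```python
# Per-round payoffs for (my move, other's move): C = cooperate, D = defect.
PAYOFF = {("C", "C"): (3, 3), ("C", "D"): (0, 5), ("D", "C"): (5, 0), ("D", "D"): (1, 1)}


def tit_for_tat(opponent_history):
    """Cooperate first, then repeat the opponent's previous move."""
    return "C" if not opponent_history else opponent_history[-1]


def always_defect(opponent_history):
    return "D"


def always_cooperate(opponent_history):
    return "C"


def average_payoff(strategy_a, strategy_b, rounds=200):
    """Average per-round payoff earned by strategy_a against strategy_b."""
    history_a, history_b, total_a = [], [], 0
    for _ in range(rounds):
        move_a = strategy_a(history_b)
        move_b = strategy_b(history_a)
        total_a += PAYOFF[(move_a, move_b)][0]
        history_a.append(move_a)
        history_b.append(move_b)
    return total_a / rounds


def evolve(strategies, generations=100, rounds=200):
    """Population shares after repeatedly rewarding strategies with above-average gains."""
    names = list(strategies)
    score = {(a, b): average_payoff(strategies[a], strategies[b], rounds)
             for a in names for b in names}
    shares = {name: 1 / len(names) for name in names}
    for _ in range(generations):
        # Expected gain of each strategy against a randomly drawn member of the population.
        fitness = {a: sum(shares[b] * score[(a, b)] for b in names) for a in names}
        mean = sum(shares[a] * fitness[a] for a in names)
        shares = {a: shares[a] * fitness[a] / mean for a in names}
    return shares


if __name__ == "__main__":
    field = {"tit_for_tat": tit_for_tat,
             "always_defect": always_defect,
             "always_cooperate": always_cooperate}
    for name, share in evolve(field).items():
        print(f"{name}: {share:.3f}")
```

In this small field, unconditional defectors gain at first by exploiting unconditional cooperators, then decline as their victims become scarcer, and Tit for Tat ends up with the largest share. The outcome depends on the assumed strategies and parameters, so it should be read as an illustration of the mechanism rather than as evidence.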

4.3.3 Trust and spotting defectors

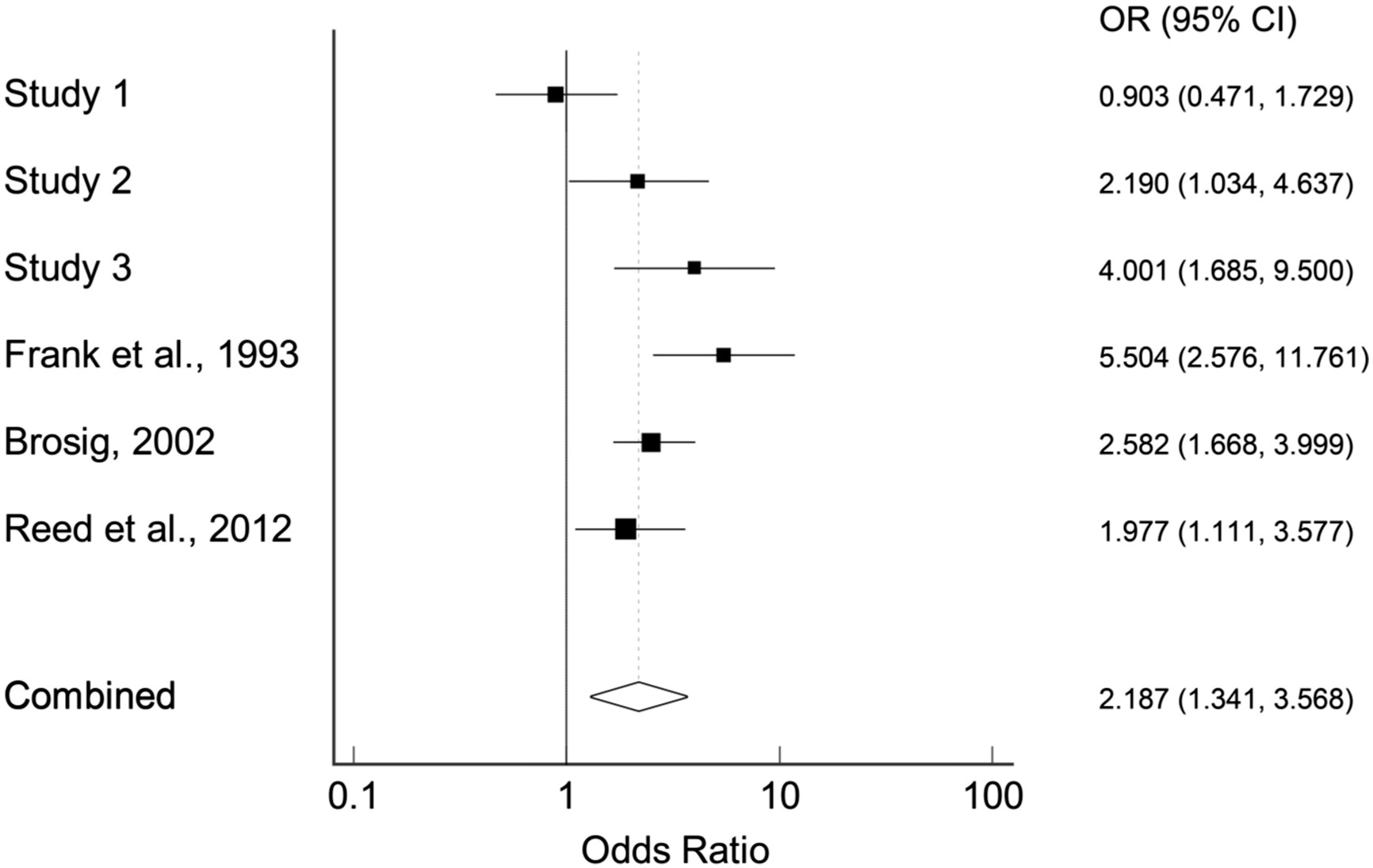

It turns out we seem to be well equipped for spotting non-cooperative behaviours. (Sparks et al. 2016) [Sparks, Adam, Tyler Burleigh, and Pat Barclay. 2016. “We Can See Inside: Accurate Prediction of Prisoner’s Dilemma Decisions in Announced Games Following a Face-to-Face Interaction.” Evolution and Human Behavior 37 (3): 210–16. https://doi.org/10.1016/j.evolhumbehav.2015.11.003.] review a set of experiments in which unacquainted participants interact in groups of 3-4 people for 10 minutes to half an hour. They are then asked to predict how each person they have interacted with would play in a prisoner’s dilemma. They then play the actual game. In each experiment, participants made a better-than-chance guess about other participants’ behaviour. This means that even a short, informal interaction with someone we do not know provides us with some signal about this person’s cooperative tendencies. Other experiments showed that some such information could be gleaned from silent 20-second videos, meaning that we are actually very quick at picking up whatever bodily cues we use for that.

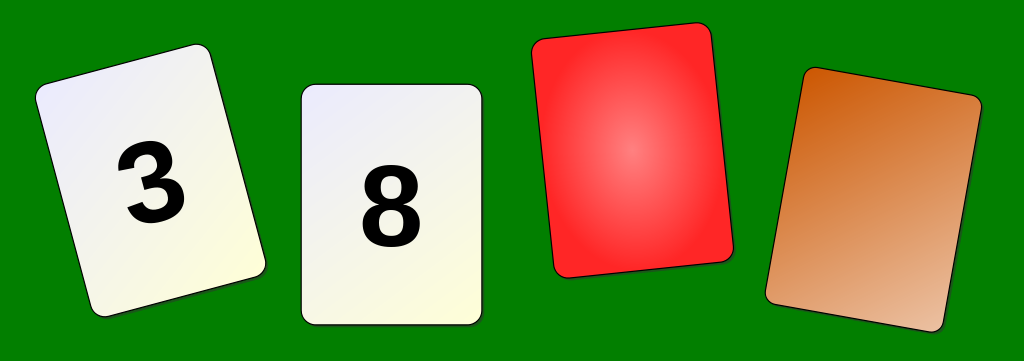

Figure 4.5: Wason’s Task, Life of Riley, CC BY-SA 4.0

Actually, defector identification seems to be deeply ingrained in our cognitive processes. A striking example is the difference in performance on Wason’s task depending on how it is framed, in (Griggs and Cox 1982) [Griggs, Richard A., and James R. Cox. 1982. “The Elusive Thematic-Materials Effect in Wason’s Selection Task.” British Journal of Psychology 73 (3): 407–20. https://doi.org/10.1111/j.2044-8295.1982.tb01823.x.]. In the standard setting, you are shown four cards, two showing a number and two showing a coloured back. You are told that the rule is as follows:

If a card has an even number, its back must be red

Which cards should you turn to check that the rule is correct?

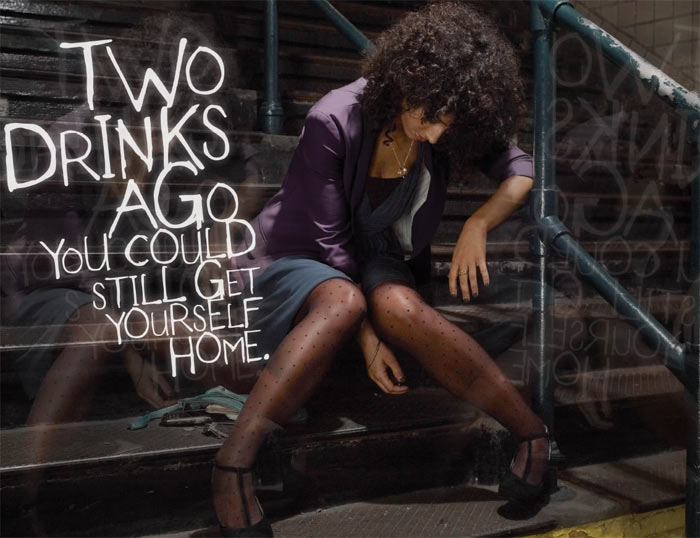

Figure 4.6: Wason’s Task drinking age variant, Life of Riley, CC BY-SA 4.0

In the modified setting, cards are said to represent people, with their age on one side and the beverage they are having on the other. The rule is:

One must be at least 18 to have an alcoholic drink.

The experiment shows that people are much more likely to make the right choices (16 and beer) in the drinking rule framing than in the original setting (8 and orange). This can be attributed to a specialization in cheating detection in our cognitive processes.
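The logic behind the right choices is the same in both framings: a rule of the form "if P then Q" can only be broken by a card combining P with not-Q, so the cards worth turning are those whose visible face shows P or shows not-Q. The sketch below applies this check to both versions. The faces 8, orange, 16 and beer come from the text above; the other faces (3, red, 25, soda) and the function name `must_turn` are assumptions made for illustration.

```python
def must_turn(visible_faces, shows_p, shows_not_q):
    """Faces that could hide a violation of 'if P then Q'.

    A card can break the rule only if it combines P with not-Q, so it needs
    checking when its visible face shows P or shows not-Q.
    """
    return [face for face in visible_faces if shows_p(face) or shows_not_q(face)]


# Abstract framing: "If a card has an even number, its back must be red."
abstract = must_turn(
    [3, 8, "red", "orange"],
    shows_p=lambda face: isinstance(face, int) and face % 2 == 0,     # even number
    shows_not_q=lambda face: isinstance(face, str) and face != "red", # back is not red
)

# Drinking framing: "One must be at least 18 to have an alcoholic drink."
drinking = must_turn(
    [16, 25, "beer", "soda"],
    shows_p=lambda face: face == "beer",                              # alcoholic drink
    shows_not_q=lambda face: isinstance(face, int) and face < 18,     # under 18
)

if __name__ == "__main__":
    print("Abstract framing:", abstract)
    print("Drinking framing:", drinking)
```

The two calls are structurally identical, which is the point of the comparison: the drinking framing does not make the logical problem any simpler, it only presents it as a matter of catching rule-breakers.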

4.4 Sustaining cooperation in the field: reputation

The main limitation of our ability to detect cheaters is that we observe only a tiny fraction of people’s actions, and even then we may misunderstand the motives or consequences of an action. As a result, we do not rely only, or even mostly, on direct observation: we pay a lot of attention to what we hear about other people, that is, their reputation. Acquiring this information is often unconscious: a significant share of small talk can be framed as exchanging information about other people’s reputation. On the other hand, we consciously devote a lot of effort to building and maintaining a good reputation, on top of a host of unconscious reputation management behaviours. The practical consequence is that reputation is a very powerful tool for behavioural interventions.

4.4.1 What is reputation?

Reputation can be defined as the set of traits a person is believed to have by another person or group of persons. The belief dimension means that a reputation can be true or false: when output is difficult to observe, a person may have a reputation for being hard-working because she puts in long hours at work, even though her productivity is low. Strictly speaking, reputation is about a specific trait, or a well-defined array of traits. In practice however, reputation about one trait tends to have spillover effects on reputation about other traits: we’ll expect a person with a reputation for honesty to be a good cooperator, for example. Of course, language is key in the whisper network which makes and breaks reputations.

Figure 4.7: Pop art style illustration of word of mouth, closely depicting the matching French expression ‘bouche à oreille’.

Historical examples of explicit reputation management codes abound, such as chivalric honour codes (sometimes sensitive to a fault, leading to fights), which persisted up to the XIXth century. Someone as unlikely as Marcel Proust fought a pistol duel with a journalist, and the genius mathematician Évariste Galois was killed in a duel at age 20, in 1832.

4.4.2 Unconscious reputation management

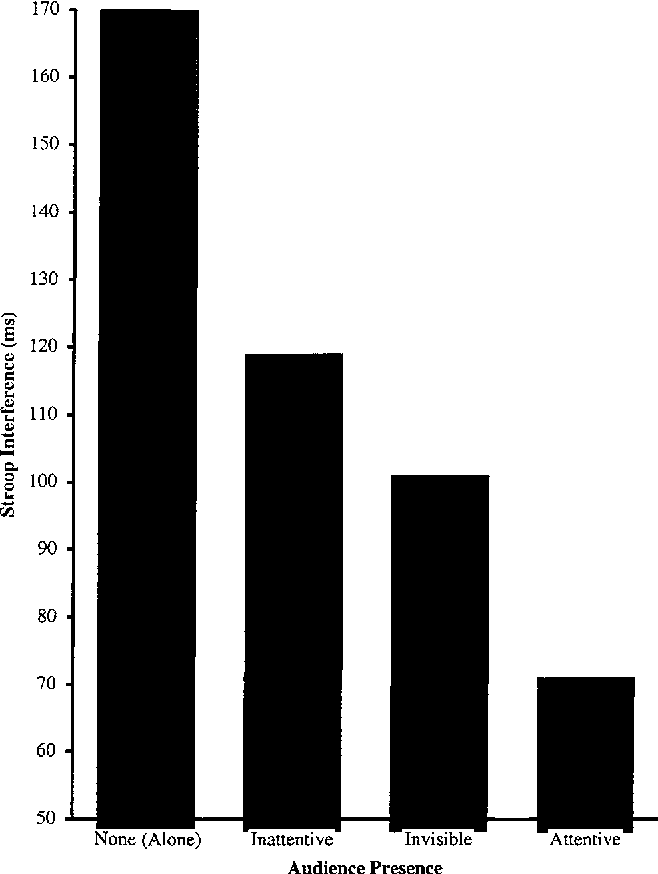

The fact that we care about our reputation can be evidenced by experiments. One of these is (Huguet et al. 1999) [Huguet, P., M-P. Galvaing, J-M. Monteil, and F. Monteil. 1999. “Social Presence Effects in the Stroop Task: Further Evidence for an Attentional View of Social Facilitation.” Journal of Personality and Social Psychology 77 (5). https://doi.org/10.1037//0022-3514.77.5.1011.]. This experiment relies on the commonplace two-stage Stroop task:

Figure 4.8: Sample card for Stroop task stage 2

- First stage: words like “boat” are written in colour. Participants must note the colour of the word. This is cognitively demanding since you have to set aside the word’s meaning.

- Second stage: the words are themselves colour names, written in another colour than the one they denote. Because of the dissonance between the meaning and colour, this task is even more demanding than the previous one.

- The experimenters monitor response delay and error rate.

A large number of psychology studies rely on this task to measure cognitive performance in different settings. Prior to Huguet et al., 1999, the presence or absence of an experimenter in the room was deemed of little consequence. The team wanted to test that assumption by comparing four conditions:

- (Control) Subjects are alone in the room while performing the task.

- (Inattentive peer) Another person, actually an experimenter of the same age bracket as the subject, is present in the room, reading a book.

- (Invisible peer) Another person (same as above) is present, and sits behind the subject (but unable to see the computer screen).

- (Attentive peer) Another person (same as above) is present in front of the subject, and looking at them at least 60% of the time.

Figure 4.9: Main result of (Huguet et al. 1999)

An important feature here is that in none of the three treatment conditions is the subject’s performance observable by the other person in the room. In the Inattentive peer condition, the experimenter reads a book, and in the Attentive peer condition, the experimenter only sees the back of the subject’s computer.

The time needed to provide a correct answer, which is the measure of performance in the task, decreases from one condition to the next, showing that (1) we exert more effort when someone is present, and (2) we increase our effort when we feel this person may be paying attention, even if we rationally know that this person cannot observe the result of our effort. Thus, people behave differently, more in line with the prevailing social norm, when they feel they are observed. In a sense, it is a generalized Hawthorne effect. A significant feature is that no actual observation needs to take place.

Figure 4.10: Mosaic eyes in New York World Trade Center Metro station, August 2025. Own picture.

In situ experiments, for example (Bateson et al. 2015; Ernest-Jones et al. 2011) [Bateson, Melissa, Rebecca Robinson, Tim Abayomi-Cole, Josh Greenlees, Abby O’Connor, and Daniel Nettle. 2015. “Watching Eyes on Potential Litter Can Reduce Littering: Evidence from Two Field Experiments.” PeerJ 3 (December): e1443. https://doi.org/10.7717/peerj.1443. Ernest-Jones, Max, Daniel Nettle, and Melissa Bateson. 2011. “Effects of Eye Images on Everyday Cooperative Behavior: A Field Experiment.” Evolution and Human Behavior 32 (3): 172–78. https://doi.org/10.1016/j.evolhumbehav.2010.10.006.], have shown that the mere picture of eyes reduces littering in a variety of environments. This has led to the use of images of human figures staring at passers-by, or just eyes (for example in New York metro stations).

4.4.3 Conscious reputation management

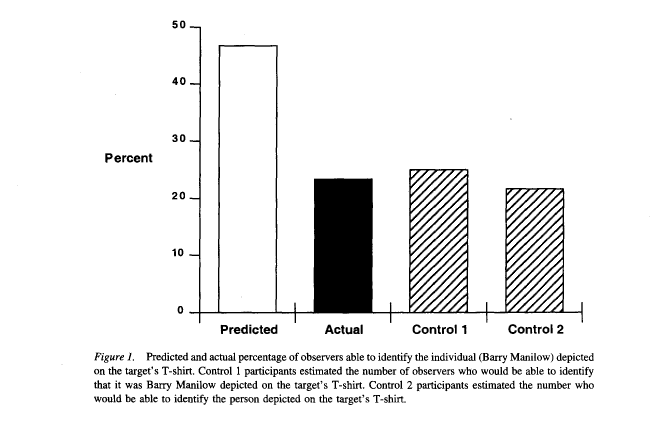

Figure 4.11: Barry Manilow

Our concern for our reputation is so strong that we tend to over-estimate how much other people pay attention to our actions: the Spotlight effect. An illustration is provided by (Gilovich et al. 2000) [Gilovich, Thomas, Victoria Husted Medvec, and Kenneth Savitsky. 2000. “The Spotlight Effect in Social Judgment: An Egocentric Bias in Estimates of the Salience of One’s Own Actions and Appearance.” Journal of Personality and Social Psychology (US) 78 (2): 211–22. https://doi.org/10.1037/0022-3514.78.2.211.], who asked students to walk around campus wearing a T-shirt with the picture of a singer generally considered a has-been (Barry Manilow).

Figure 4.12: Barry Manilow

These students were asked to estimate the percentage of people they met who would be able to identify the singer (and thus think that the student had questionable music tastes). Their guess, close to 50%, was twice the actual figure, which was correctly estimated by students not wearing the T-shirt.

4.8 Bottom line: as with any powerful tool, use with caution

Responses to social cognition cues are deeply embedded in our behaviours. We can rely on such cues to meaningfully change behaviours and the norms guiding behaviours. This, of course, requires a high degree of sensitivity to the pre-existing norms and representations.

4.9 Is cooperation an innate trait?

Since prosocial behaviours are deeply embedded in the functioning of society, disentangling innate from acquired cooperation may seem a moot point from an applied public policy perspective. However, if cooperation is mostly learned, we’d expect cooperative behaviours to be contingent on the system of punishment and rewards sustaining this learning process, and to be low when the behaviour is not socially observable. Conversely, a reliable innate tendency to cooperate could sustain interventions relying only on intrinsic rewards.

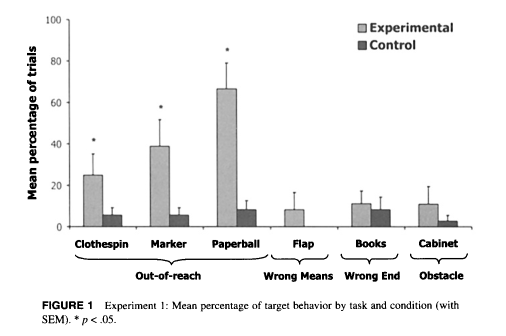

4.9.1 Young children respond to calls for help cues

In (Warneken and Tomasello 2007) [Warneken, Felix, and Michael Tomasello. 2007. “Helping and Cooperation at 14 Months of Age.” Infancy 11 (3): 271–94. https://doi.org/10.1111/j.1532-7078.2007.tb00227.x.], very young children (14 months old) were put in the same room as an experimenter. The experimenter faked having trouble completing a simple task, such as picking up an object or throwing a paper ball in a bin. When the experimenter expressed dismay at the situation, many children tried to help, even when provided with a new toy to play with. (The same setting was tried on adult chimpanzees: attempts to help were very rare.) The presence of a parent seemed to make little difference, whereas a difference would be expected if the cooperative behaviour were the consequence of education. This result dovetails with several other experiments showing that prosocial tendencies are observed at a very young age, with a significant innate component.

4.9.2 Reputation awareness emerges early

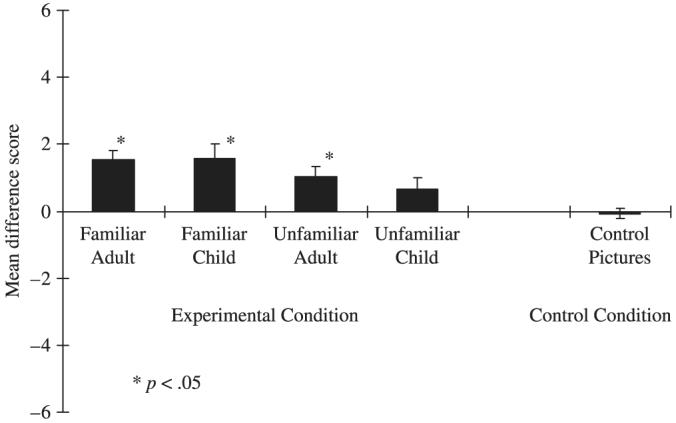

Figure 4.15: Main result from (Fu and Lee 2007)

Reputation awareness seems to emerge between ages 3 and 5, which is fairly early in child development, especially given that attention to reputation effects has been largely directed at teenagers. In (Fu and Lee 2007) [Fu, Genyue, and Kang Lee. 2007. “Social Grooming in the Kindergarten: The Emergence of Flattery Behavior.” Developmental Science 10 (2): 255–65. https://doi.org/10.1111/j.1467-7687.2007.00583.x.], experimenters ask children aged 5 to rate a picture, with the purported author either present or absent. Children give higher grades when the author is present, and when the author is familiar. This response is in line with reputation management, favourable grades providing opportunities for future interactions. This does not work with 3-year-old children, who are insensitive to these conditions. It is not an ingroup/outgroup effect: in a complementary experiment, children who have to assign demerits do not select outgroup targets more often.

Since we know from the previous experiment (and others) that even infants are able to notice and act on other people’s feelings and needs (they have a sufficient theory of mind for that), we can assume that even 3-year-old children know the impact of their grades. Awareness of the social consequences, however, emerges only later, leading older children to bias their grades.

4.9.3 The pleasure of giving

Cross-section surveys consistently show a strong correlation between charitable donations and happiness, both at the individual and at the country level. Thus, the World Happiness Report uses the frequency of charitable giving as a proxy for social cohesion at country level (e.g. (Helliwell et al. 2023) [Helliwell, John F., Richard Layard, J. D. Sachs, Lara B. Aknin, Jan-Emmanuel De Neve, and S. Wang. 2023. World Happiness Report 2023. Sustainable Development Solutions Network. https://worldhappiness.report/ed/2023/.]). (Of course, data availability plays a large role in this choice.) At an individual level, there is of course a confounding variable issue: richer people are on average happier and have more disposable income for charitable giving. However, (Aknin et al. 2013) [Aknin, Lara B., Christopher P. Barrington-Leigh, Elizabeth W. Dunn, et al. 2013. “Prosocial Spending and Well-Being: Cross-cultural Evidence for a Psychological Universal.” Journal of Personality and Social Psychology (US) 104 (4): 635–52. https://doi.org/10.1037/a0031578.] distinctly suggests that charitable actions indeed cause some happiness in the giver. In their experiment, they provided subjects with $20, with the instruction to either spend it on themselves (control group) or to allocate it to a charity in a preset list (treatment group). People giving money reported more positive feelings.

This experiment is impressive because the positive effects of giving are large enough to overcome the expected negative impact of loss aversion and the endowment effect. Moreover, a purely instrumental approach to cooperation would have predicted that positive feelings should emerge only in response to gifts which provide some future advantage (building social ties, obligations or reputation), whereas the gift was anonymous in this case.

Figure 4.1: Birds in V formation. Cropped version of Birds V formation photo by Inu Etc, CC BY-SA 4.0, via Wikimedia Commons

Figure 4.2: Not your typical orang-utan, but still very well-adapted to their ecological niche. The Morporker, by Gilles Roussel (Boulet), tribute to Terry Pratchett

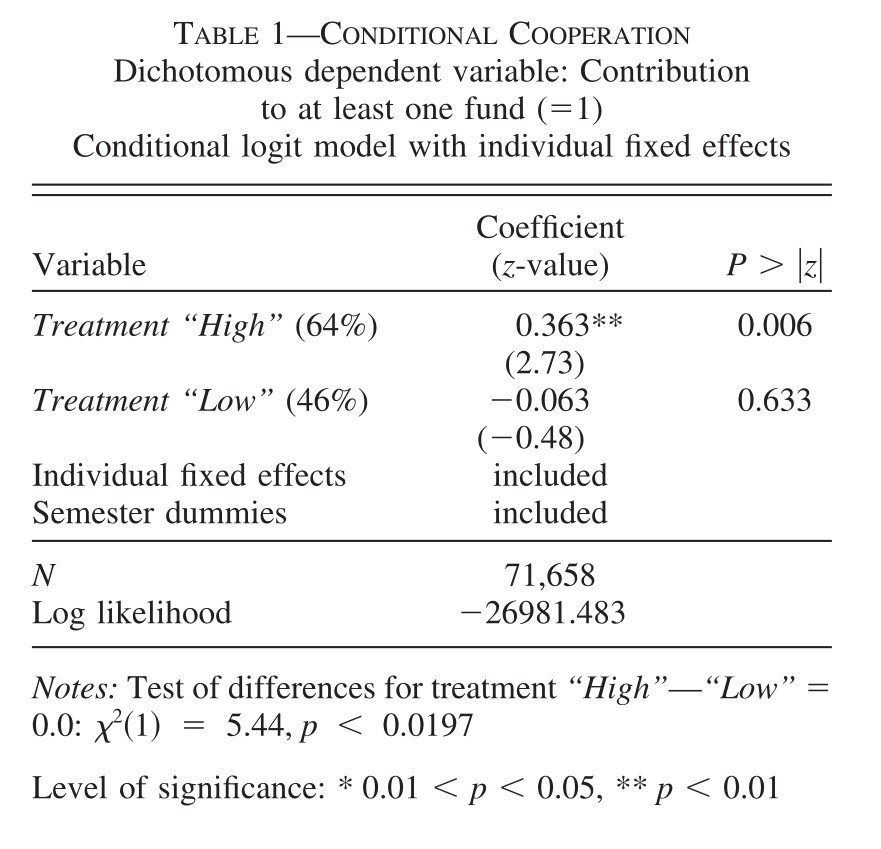

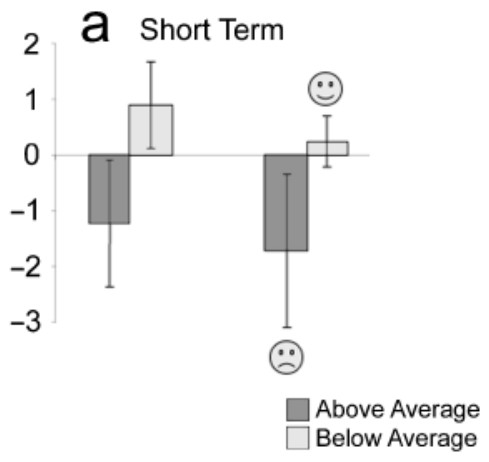

Figure 4.13: Main result of (Frey and Meier 2004)

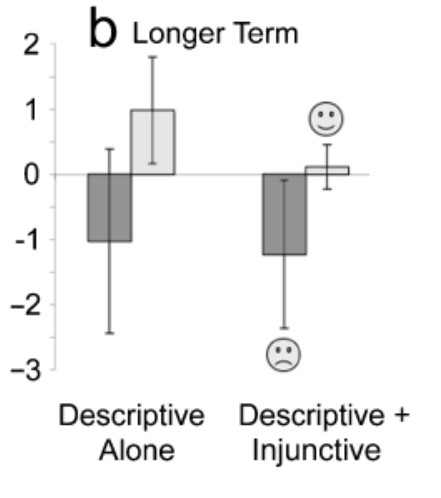

Figure 4.14: Building a new norm, one sign at a time
