Chapter 2 Heuristics and models

2.1 Foreword

This chapter is the concatenation of what I covered in two separate classes in 2023. Students told me they were actually familiar with a significant share of the material, especially behavioural biases and general cognition models.

2.2 In a nutshell

For most people, behavioural public policy is exemplified by the nudge movement: small interventions in the choice environment which push you towards an option deemed better for yourself. This movement finds its roots in a reaction to the dominance of the rational choice and expected utility frameworks. Over a period spanning the XVIIIth, XIXth and most of the XXth centuries, the representation of what people should do (normative perspective) and actually do (descriptive perspective) has increasingly been dominated by the figure of the rational agent, or homo economicus. Economic policies were designed and assessed under the assumption that people would be fully aware of all their effects, and react to them in the way that best serves their own interests.

Starting in the 1950s, researchers in experimental economics and psychology began to document (and often rediscover) situations where actual choices differ from the predictions of rational choice theory. These effects were quickly labelled “biases”, since they were understood as deviations from the rational norm. They constitute a large part of the toolbox of behavioural public policy. They highlight situations where people commonly err, or rather behave in a way which may not be in their best interest. Hence the drive to gently help them, to nudge them, towards options more likely to be in their best interest.

Collecting unconnected experimental evidence to push back against the dominance of homo economicus was initially necessary. At some point, however, these findings need to be organized into a theory of their own. We do not have a definitive theory of human cognition, so you have to be familiar with several models and apply each of them where it is most relevant.

This chapter is heavily influenced by the presentation given in (Oliver 2017Oliver, Adam. 2017. The Origins of Behavioural Public Policy. Cambridge University Press. https://doi.org/10.1017/9781108225120.).

2.3 Origins and limits of the rational choice theory

2.3.1 Before rationality was the universal assumption

In their influential Nudge book (Thaler and Sunstein 2008Thaler, Richard H., and Cass R. Sunstein. 2008. Nudge: Improving Decisions about Health, Wealth, and Happiness. Yale University Press.), Thaler and Sunstein contrast policy-making designed for homo economicus, embodying perfect rationality, with policies designed for actual homo sapiens. Since this opposition structures most of the field, we should be aware of where it comes from.

Going back to the Early Modern period, policy-making was a subset of moral philosophy, then of political science. A keen knowledge of human behaviour was deemed a necessary quality of a sovereign in works as different in their perspectives as Machiavelli’s The Prince[7] and Erasmus’ The Education of a Christian Prince.[8] In the late XVIIIth century, Adam Smith saw his Theory of Moral Sentiments as his masterpiece, and considered The Wealth of Nations as a kind of derivative work. In his 1936 General Theory, Keynes explicitly states that key behaviours for economic activity, such as investment, stem not from cold rational decisions, but from “animal spirits”:

Even apart from the instability due to speculation, there is the instability due to the characteristic of human nature that a large proportion of our positive activities depend on spontaneous optimism rather than on a mathematical expectation, whether moral or hedonistic or economic. Most, probably, of our decisions to do something positive, the full consequences of which will be drawn out over many days to come, can only be taken as a result of animal spirits – of a spontaneous urge to action rather than inaction, and not as the outcome of a weighted average of quantitative benefits multiplied by quantitative probabilities.

[7] Throughout this class, hyperlinks to Wikipedia, such as this one, are invitations to read the related article. They may not be directly linked to the subject matter beyond my use in this text, but I deem they can provide you with useful background.

[8] I am fairly sure that comparable, and probably older, texts exist in the Chinese, Indian and Islamic worlds.

We see in this quote that Keynes was debating another position: rational choice theory.

2.3.2 From prescribing to assuming rationality

Rational choice theory originates in XVIIIth-century mathematics, which tried to attach a monetary value (a money equivalent) to lotteries. Mathematicians soon came up with expected value theory: if a lottery gives me a 1/2 chance of winning 10 and a 1/2 chance of winning 0, I should be ready to pay \(\frac{1}{2}\times 10+\frac{1}{2}\times 0=5\) to play.

However intellectually clean, expected value theory failed to predict adequately how people behaved when faced with actual situations. The most famous illustration is the St. Petersburg paradox, devised by mathematician Nicolas Bernoulli. The game is simple: a fair coin is tossed repeatedly; the initial stake is 2, and it is doubled every time heads appears. The first time tails appears, the game ends and the player wins the current stake. How much would you be ready to pay to enter this game?

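If the first tails appears at toss \(n\), which happens with probability \(\left(\frac{1}{2}\right)^n\), the player wins \(2^n\). The monetary expected value of the payoff \(X\) is thus:

\[\mathbb{E}(X) = \sum_{n=1}^{\infty}\left(\frac{1}{2}\right)^n 2^n = \sum_{n=1}^{\infty} 1 = +\infty\]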

The actual willingness to pay is around 4.

Nicolas Bernoulli’s cousin, Daniel Bernoulli, and Gabriel Cramer separately came up with the idea that increasing amounts of money do not provide proportionately increasing levels of satisfaction: €1000 seems much more valuable when you earn close to nothing than when you already earn millions. Daniel Bernoulli suggested that gains should be log-weighted (\(\log\left(2^n\right)\) instead of \(2^n\) in the sum) and Gabriel Cramer made the same argument for \(\sqrt{2^n}\). Both solutions yield a finite expected value, and paved the way for expected utility theory.
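A short numerical check of these claims, as a minimal Python sketch: the partial sums of the expected value grow without bound, while the log (Bernoulli) and square-root (Cramer) weightings give finite values. To express them as amounts of money, the sketch converts expected utility back into a certainty equivalent, the sure amount with the same utility. This conversion, the truncation at 200 terms and ignoring the player's initial wealth are assumptions of the illustration.

```python
import math


def st_petersburg_terms(n_max):
    """Yield (probability, payoff) pairs for the first n_max possible endings.

    The game ends at toss n (first tails) with probability (1/2)**n and pays 2**n.
    """
    for n in range(1, n_max + 1):
        yield 0.5 ** n, 2.0 ** n


def partial_expected_value(n_max):
    # Every term contributes (1/2)**n * 2**n = 1, so the partial sum equals n_max.
    return sum(p * x for p, x in st_petersburg_terms(n_max))


def certainty_equivalent(u, u_inv, n_max=200):
    # Expected utility of the game (truncated after n_max terms, where the
    # remaining tail is negligible), converted back into a sure amount of money.
    expected_utility = sum(p * u(x) for p, x in st_petersburg_terms(n_max))
    return u_inv(expected_utility)


for n_max in (10, 100, 1000):
    print(f"expected value of the first {n_max} terms: {partial_expected_value(n_max)}")

bernoulli = certainty_equivalent(math.log, math.exp)       # log utility
cramer = certainty_equivalent(math.sqrt, lambda v: v * v)  # square-root utility
print(f"Bernoulli (log): {bernoulli:.3f}, Cramer (sqrt): {cramer:.3f}")
```

Under these assumptions, the expected log payoff is \(2\log 2\), so Bernoulli's certainty equivalent is exactly 4, close to the willingness to pay reported above; Cramer's square root gives \(3+2\sqrt{2}\approx 5.83\).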

The expected utility framework provided a formal foundation to the influential idea of John Stuart Mill that political science ought to consider people as rational agents, seeking to maximise their utility (an idea itself derived from Bentham’s utilitarianism). At that time, social sciences in general and economics in particular were looking for ways to uncover mathematically-expressed laws of human societies, in the same way the natural sciences were making progress in this direction.[9] Under expected utility theory, a rational choice must respect four axioms, delineated mainly by John von Neumann and Oskar Morgenstern:

- Completeness: for any two options x and y, I prefer x to y, y to x, or I am indifferent between the two.

- Transitivity: If I prefer an apple to a pear, and a pear to a grapefruit, then I must prefer an apple to a grapefruit: if \(A\geq B\) and \(B \geq C\), then \(A \geq C\).

- Continuity: Whenever there are three options such that \(x\leq{}y\leq{z}\), where \(\leq\) expresses the preference relation, there exists a probability \(p\) such that I am indifferent between the lottery giving \(x\) with probability \(p\) and \(z\) with probability \(1-p\), and getting \(y\) for sure: \(px+(1-p)z\sim{}y\).

- Independence: If I prefer \(x\) to \(y\), my ranking should not be affected when both are mixed, with the same probability, with the same third option \(z\): for any \(z\) and any \(p\in(0,1]\), \[px+(1-p)z\geq{}py+(1-p)z\]

[9] Isaac Asimov’s Foundation cycle is one of the many illustrations of how strong the belief in the possibility of mathematical laws of society was, up to the second part of the XXth century.
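To make completeness and transitivity concrete, here is a minimal Python sketch that checks them on a finite set of options. The two choosers are hypothetical, and `weakly_prefers(a, b)` is an assumed interface meaning "a is at least as good as b".

```python
from itertools import product


def is_complete(options, weakly_prefers):
    # Completeness: every pair can be ranked one way, the other, or both (indifference).
    return all(weakly_prefers(a, b) or weakly_prefers(b, a)
               for a, b in product(options, repeat=2))


def is_transitive(options, weakly_prefers):
    # Transitivity: whenever a >= b and b >= c, we must also have a >= c.
    return all(weakly_prefers(a, c)
               for a, b, c in product(options, repeat=3)
               if weakly_prefers(a, b) and weakly_prefers(b, c))


fruits = ["apple", "pear", "grapefruit"]

# Hypothetical consistent chooser: ranks fruits with a fixed score.
SCORE = {"apple": 3, "pear": 2, "grapefruit": 1}


def consistent(a, b):
    return SCORE[a] >= SCORE[b]


# Hypothetical cyclic chooser: apple > pear > grapefruit > apple.
BEATS = {("apple", "pear"), ("pear", "grapefruit"), ("grapefruit", "apple")}


def cyclic(a, b):
    return a == b or (a, b) in BEATS


for name, relation in [("consistent", consistent), ("cyclic", cyclic)]:
    print(name, "complete:", is_complete(fruits, relation),
          "transitive:", is_transitive(fruits, relation))
```

The cyclic chooser passes completeness but fails transitivity: it prefers an apple to a pear and a pear to a grapefruit, but not an apple to a grapefruit.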

These axioms are respected by most mathematically tractable utility functions used in economic models, and they seem, at face value, rather innocuous. In practice, they also mean one can construct empirical utility curves by having people in lab settings express their willingness to pay for various lotteries.
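One common way to do this is the chained certainty-equivalent method. The Python sketch below is an illustration under stated assumptions: 50/50 lotteries between 0 and 100, and a simulated participant with square-root utility standing in for real answers (both hypothetical). Since the axioms pin down utility only up to a positive affine transformation, we are free to set u(0) = 0 and u(100) = 1.

```python
import math


def certainty_equivalent(low, high, u, u_inv, p=0.5):
    # Sure amount a participant with utility u values as much as a lottery
    # paying `high` with probability p and `low` otherwise.
    return u_inv(p * u(high) + (1 - p) * u(low))


def elicit_utility_points(ask_ce, low=0.0, high=100.0):
    """Chained certainty-equivalent elicitation with 50/50 lotteries.

    Utility is normalised so that u(low) = 0 and u(high) = 1. The certainty
    equivalent of a 50/50 lottery between two known points gets the average
    of their utilities.
    """
    points = {low: 0.0, high: 1.0}
    mid = ask_ce(low, high)
    points[mid] = 0.5                 # halfway in utility between low and high
    points[ask_ce(low, mid)] = 0.25   # halfway between low and mid
    points[ask_ce(mid, high)] = 0.75  # halfway between mid and high
    return dict(sorted(points.items()))


def sqrt_participant(lo, hi):
    # Hypothetical participant with square-root utility, used only to simulate answers.
    return certainty_equivalent(lo, hi, math.sqrt, lambda v: v * v)


points = elicit_utility_points(sqrt_participant)
for amount, utility in points.items():
    print(f"u({amount:g}) = {utility}")
```

With real participants, `ask_ce` would be replaced by their stated answers; the recovered points then trace an empirical utility curve, here matching \(\sqrt{x/100}\).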

We should bear in mind that up to the 1960s, rational choice theory and its expected utility formulation were seen as a normative device. They were not assumed to describe accurately how people behaved, but how they should behave, in a moral sense derived implicitly from utilitarianism. Observed departures from expected utility predictions were thus not widely seen as challenges, but as promising areas where people should be taught to make better choices for themselves.

Two other elements in the history of economic thought contributed to the rise of this framework to near-universal dominance. The first is the influence of the Chicago School of Economics, around Milton Friedman. Friedman argued, taking physics as a paradigm, that economic models should not be judged by the realism of their assumptions, but by their ability to make testable predictions. This sidelined the growing body of evidence that people did not follow the rules of rational choice theory. The second is the Lucas critique, which states that one cannot predict the effect of an economic policy solely on the basis of past behaviour: people will adapt to the new policy in new ways. It thus required economists to always consider people’s best response to the new policy, e.g. workers asking for inflation-indexed wages if the government tried to use inflation to reduce real wages without touching nominal wages.

2.3.3 Challenges to rationality

Outside macroeconomics,[10] researchers piled up evidence that many behaviours, including in highly-controlled experiments, could not be explained by expected utility theory, and that such departures were the norm rather than the exception.

[10] At that time, macroeconomics held a dominant position and was often equated with economics as a whole.

Within economics, the Allais paradox (1953) had already shown that the independence axiom is not as natural as it looks. Consider the following lotteries, to be compared pairwise (1A against 1B, then 2A against 2B), with the probability of each payoff:

| Payoff | 1A | 1B | 2A | 2B |
|---|---|---|---|---|
| Nothing | 0% | 1% | 89% | 90% |
| €1M | 100% | 89% | 11% | 0% |
| €5M | 0% | 10% | 0% | 10% |

In laboratory settings, including when using small payments, most people prefer 1A to 1B (despite the higher expected value of 1B), which could be rationalized as risk aversion. The same people, however, generally prefer 2B to 2A. This pair of choices (1A, 2B) violates the independence axiom. If we rewrite the payoffs:

| Probability | 1A | 1B | 2A | 2B |
|---|---|---|---|---|
| 89% | *€1M* | *€1M* | *Nothing* | *Nothing* |
| 1% | €1M | Nothing | €1M | Nothing |
| 10% | €1M | €5M | €1M | €5M |

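The payoffs in italics are the same in 1A and 1B on the one hand, and in 2A and 2B on the other hand. Under the independence axiom, this common part should not affect the choice, which hinges on the last two lines: they are identical in both pairs (€1M for sure in A, against a 1% chance of nothing and a 10% chance of €5M in B). Someone who prefers 1A to 1B should therefore also prefer 2A to 2B.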
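The argument can be checked numerically. The Python sketch below computes, for a few arbitrary utility functions (assumptions chosen for illustration only, with payoffs in € millions), the expected-utility gap between 1A and 1B and between 2A and 2B. Algebraically, both gaps equal \(0.11\,u(1)-0.01\,u(0)-0.10\,u(5)\).

```python
import math

# Each lottery maps a payoff (in € millions) to its probability.
LOTTERIES = {
    "1A": {1: 1.00},
    "1B": {1: 0.89, 0: 0.01, 5: 0.10},
    "2A": {0: 0.89, 1: 0.11},
    "2B": {0: 0.90, 5: 0.10},
}


def expected_utility(lottery, u):
    return sum(prob * u(payoff) for payoff, prob in lottery.items())


def gaps(u):
    # Returns (EU(1A) - EU(1B), EU(2A) - EU(2B)) for utility function u.
    eu = {name: expected_utility(lot, u) for name, lot in LOTTERIES.items()}
    return eu["1A"] - eu["1B"], eu["2A"] - eu["2B"]


# Arbitrary utility functions, for illustration only.
utilities = {
    "linear": lambda x: x,
    "square root": math.sqrt,
    "log(1 + x)": math.log1p,
    "very risk averse": lambda x: 1 - math.exp(-5 * x),
}

for name, u in utilities.items():
    gap1, gap2 = gaps(u)
    print(f"{name:>16}: EU(1A)-EU(1B) = {gap1:+.4f}, EU(2A)-EU(2B) = {gap2:+.4f}")
```

Whatever the utility function, the two gaps coincide, so an expected-utility maximiser who prefers 1A (positive gap) also prefers 2A; the commonly observed pair (1A, 2B) cannot be produced by any utility function.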

As theoretical as it may seem, the Allais paradox highlights a phenomenon called a preference for certainty: when a certain option is offered, people choose as if they had a much higher risk aversion than when no such option is offered. This has a direct implication for policy: in most cases, the results of an existing policy are deemed to be known, while the outcome of a new policy is uncertain. This entails a preference for the status quo, both from policy-makers and from people potentially affected by the policy.

Another significant challenge of that time was the work of Herbert Simon on bounded (or limited) rationality. His most influential insight for us is his departure from the assumption that people perfectly optimize over all possible alternatives. Since the brain is limited in time and resources, he argues, it is actually not best for it to always search for the best solution: it should instead reach a good enough one, satisficing instead of optimizing. The problem is to know what is good enough without actually computing all the outcomes. Simon’s point was that people use rules of thumb to decide what is satisfactory. In other words, he posited that the mind uses simplified rules, heuristics, to quickly reach near-optimal outcomes.
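The contrast between optimizing and satisficing can be sketched in a few lines of Python. The aspiration level of 0.95, the 1,000 options and their uniformly random values are all assumptions made for illustration; the point is only to compare how many options each strategy has to examine.

```python
import random


def optimize(options, evaluate):
    # Examine every option and keep the best one.
    return max(options, key=evaluate), len(options)


def satisfice(options, evaluate, aspiration):
    # Examine options in turn and stop at the first one that is "good enough".
    for examined, option in enumerate(options, start=1):
        if evaluate(option) >= aspiration:
            return option, examined
    # No option reaches the aspiration level: fall back on the best one seen.
    return max(options, key=evaluate), len(options)


rng = random.Random(42)
# Hypothetical choice set: 1,000 options whose values are uniform draws in [0, 1).
values = [rng.random() for _ in range(1000)]
options = list(range(len(values)))


def evaluate(option):
    return values[option]


best, cost_opt = optimize(options, evaluate)
good, cost_sat = satisfice(options, evaluate, aspiration=0.95)
print(f"optimizer:  value {values[best]:.3f} after examining {cost_opt} options")
print(f"satisficer: value {values[good]:.3f} after examining {cost_sat} options")
```

The satisficer settles for an option slightly below the best, but usually after examining only a small fraction of the choice set; the hard part, as Simon noted, is setting the aspiration level without computing everything.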

2.4 Behavioural biases

The rise to near-hegemony of rational choice theory[11] made it legitimate to document more widely and systematically instances where observed behaviours differ from what expected utility theory would predict. Two fields progressed more or less in parallel: experimental psychology and experimental economics. In this class, I will focus on the former, for two reasons:

- Key results were obtained from the 1970s onward, while landmarks in experimental economics are more recent (1990s and 2000s).

- Experimental psychology results are much more broadly known, and fixed a large part of the terminology and concepts widely used in behavioural public policy.

[11] Within mainstream economics. Marxian economics, a strong subfield at the time, and some of its offshoots, such as the Regulation school, operated on a different basis.

For those willing to explore the experimental economics side, (Schimmelpfennig and Muthukrishna 2023Schimmelpfennig, Robin, and Michael Muthukrishna. 2023. “Cultural Evolutionary Behavioural Science in Public Policy.” Behavioural Public Policy, January 24, 1–31. https://doi.org/10.1017/bpp.2022.40.) provide a useful list of landmark results.

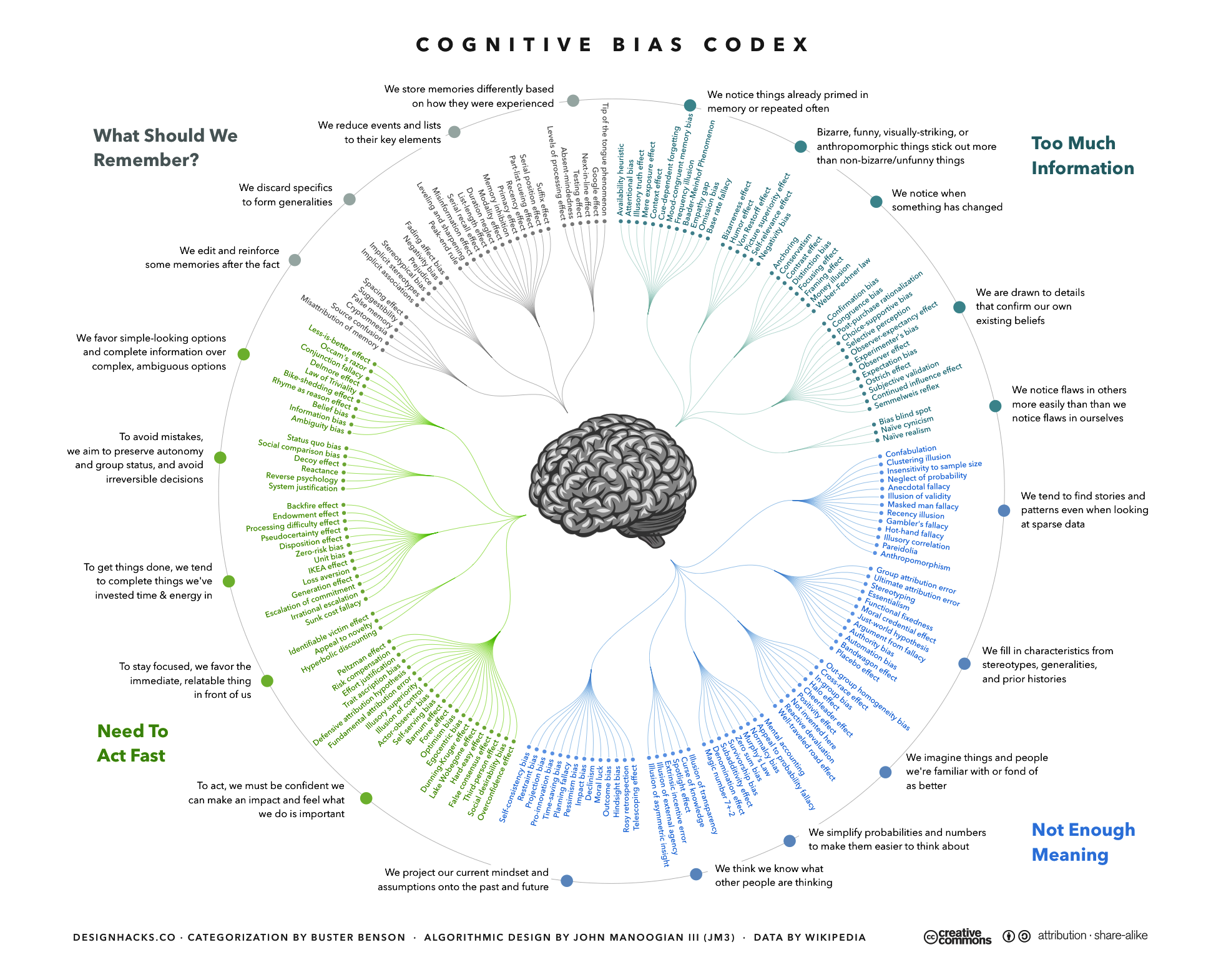

The term cognitive (or behavioural) bias, referring to situations where observed behaviour departs from expected utility theory, was coined in 1972 by Daniel Kahneman and Amos Tversky and soon became common currency. As I hinted in the introduction, there are now thousands of papers documenting such situations,[12] to the extent that it has become difficult to maintain a reasonably complete list of currently accepted biases, much less a well-structured one. For the visualisation below, its author used a classification algorithm relying on biases described in the English-language Wikipedia as of 2016.

[12] You are probably aware of the Replication crisis which plagues the field. Any single study should be taken with more than a grain of salt.

Figure 2.1: An algorithmically-assisted classification of behaviours labelled as biases in Wikipedia, Jm3, CC BY-SA 4.0

Several behavioural public policy classes I reviewed while preparing this class devote a significant share of their time to exploring parts of this codex in detail. In some respects, it indeed represents the basic toolbox for behavioural interventions, providing you with the concepts to analyse the problem and levers for behavioural change. In my opinion, there is limited value in my walking you through these: accessible descriptions are often good enough, and the lack of an overall organizing framework, a core issue of the bias-list version of behavioural science, makes it cognitively difficult to memorize more than a few of them. Thus, I’ll limit myself here to presenting a small selection of the biases most commonly encountered in the literature.

2.4.1 Status quo

The status quo bias refers to the tendency to prefer the current situation to another which would require conscious action, however minimal. A common example is that if an option is pre-selected (opt-out), people are much more likely to take it than if they have to select it actively (opt-in).

Figure 2.2: Model cookie banner with pre-checked options.


We are all familiar with uses of this bias in digital interfaces, for example cookie consent forms where the default is to have everything selected, and you have to manually deselect the cookies you want to avoid. This design was outlawed by the European Court of Justice in 2019, following the GDPR regulation. We’ll come back to it in the class about Dark Patterns.

It is also the main lever of the famous Save More Tomorrow experiment (Thaler and Benartzi 2004Thaler, Richard H., and Shlomo Benartzi. 2004. “Save More Tomorrow™: Using Behavioral Economics to Increase Employee Saving.” Journal of Political Economy 112 (S1): S164–87. https://doi.org/10.1086/380085.).[13] In the US, many people are aware that their savings rates are lower than what rational financial planning would advise, but fail to act on this. This intervention introduced (among other devices) automatic enrollment: when signing their work contract, new workers were automatically enrolled in a company-sponsored savings plan (a 401(k) scheme). While they retained the option to opt out, the participation rate jumped from 64% to 81%, with few people opting out later.

[13] This experiment is one of the success stories of the nudge era. Here is an ungated version of the paper.

2.4.2 Loss aversion

Loss aversion refers to the observation that people give more weight in their decisions to (relatively small) losses than to gains of comparable magnitude. Many experiments describe this phenomenon using lab lotteries, but the best known experiment is described in (Kahneman et al. 1990Kahneman, Daniel, Jack L. Knetsch, and Richard H. Thaler. 1990. “Experimental Tests of the Endowment Effect and the Coase Theorem.” Journal of Political Economy 98 (6): 1325–48. https://www.jstor.org/stable/2937761.). The paper describes a series of experiments, but the most famous is the mug one. In a class of 44 Cornell undergraduate law students, the experimenters distributed 22 Cornell-branded mugs (list price $6), and let students state how much they’d be willing to pay, and at what price they’d be willing to sell. Since the mugs are distributed randomly, valuations should not depend on who received a mug, and about half of the mugs should initially land with students who value them less than some non-owner does: expected utility theory thus predicts an average of 11 trades. Over 4 trials, the actual number of trades was between 1 and 4. The median buyer reservation price was around $2.5, the median seller reservation price $5.25. In other terms, students who had gotten the mugs for free valued them about twice as much as those who did not: they felt that selling under the retail price meant incurring a loss, even if they would have more use for a lower cash amount than for the mug itself.[14]

[14] Loss aversion can be understood as the explanation for the endowment effect.
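The benchmark of 11 trades can be reproduced with a small Monte Carlo simulation. This Python sketch assumes (hypothetically) that valuations are independent uniform draws, unaffected by who received a mug, which is exactly the no-endowment-effect benchmark; efficient trading then moves the 22 mugs to the 22 students who value them most.

```python
import random


def expected_trades(n_students=44, n_mugs=22, n_runs=10_000, seed=0):
    """Average number of trades needed to reach the efficient allocation.

    Assumption: valuations are i.i.d. uniform draws, independent of ownership,
    i.e. there is no endowment effect.
    """
    rng = random.Random(seed)
    total_trades = 0
    for _ in range(n_runs):
        valuations = [rng.random() for _ in range(n_students)]
        owners = set(rng.sample(range(n_students), n_mugs))  # random distribution of mugs
        ranked = sorted(range(n_students), key=lambda i: valuations[i], reverse=True)
        top = set(ranked[:n_mugs])                            # efficient owners
        # Each owner outside the top group sells to a non-owner inside it.
        total_trades += len(owners - top)
    return total_trades / n_runs


print(f"average number of trades: {expected_trades():.2f}")
```

The exact expectation is \(22 \times 22/44 = 11\): by symmetry, each mug has a one-in-two chance of landing with a student outside the top half of valuations.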

In daily life, we see that people are more ready to spend time and effort to avoid spending relatively small amounts of money, e.g., finding a free parking space rather than paying a parking fee, and fail to exert comparable effort to gain somewhat larger sums, e.g., mail-in rebates.

2.4.3 Representativeness

The representativeness heuristic is a blanket term covering the fact that the preconceptions we have about a situation can override hard facts in our assessment. A famous illustration is the Linda problem, from (Tversky and Kahneman 1983Tversky, Amos, and Daniel Kahneman. 1983. “Extensional Versus Intuitive Reasoning: The Conjunction Fallacy in Probability Judgment.” Psychological Review (US) 90 (4): 293–315. https://doi.org/10.1037/0033-295X.90.4.293.) (ungated)[15]:

Linda is 31 years old, single, outspoken and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations. Rank the following statements in order on how well they apply to Linda now:

1. Linda is active in the feminist movement

2. Linda is a bank employee

3. Linda is a bank employee and active in the feminist movement

Simple probability tells us that statement 2 is necessarily at least as probable as statement 3, since 3 is strictly included in 2: every feminist bank employee is a bank employee. However, 85% of the 88 students in the experiment ranked 3 as more probable than 2 (the conjunction fallacy).

[15] In the actual experiment, there were more statements. This excerpt uses only those of interest for the result.
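The conjunction rule behind this result follows in one line from the definition of conditional probability:

\[P(\text{bank} \cap \text{feminist}) = P(\text{bank}) \times P(\text{feminist} \mid \text{bank}) \leq P(\text{bank})\]

since \(P(\text{feminist} \mid \text{bank}) \leq 1\). However representative of Linda the feminist part looks, adding it can only lower the probability of statement 3 relative to statement 2.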

Policy-wise, this heuristic pervades choices and behaviours. For example, job consultants at Pôle Emploi told me that they often caught themselves directing young people towards gender-typed employment, even when they had the information that, for example, nursing homes needed strong people able to lift and carry people around, or that security companies were looking for female personnel.

2.4.4 Availability

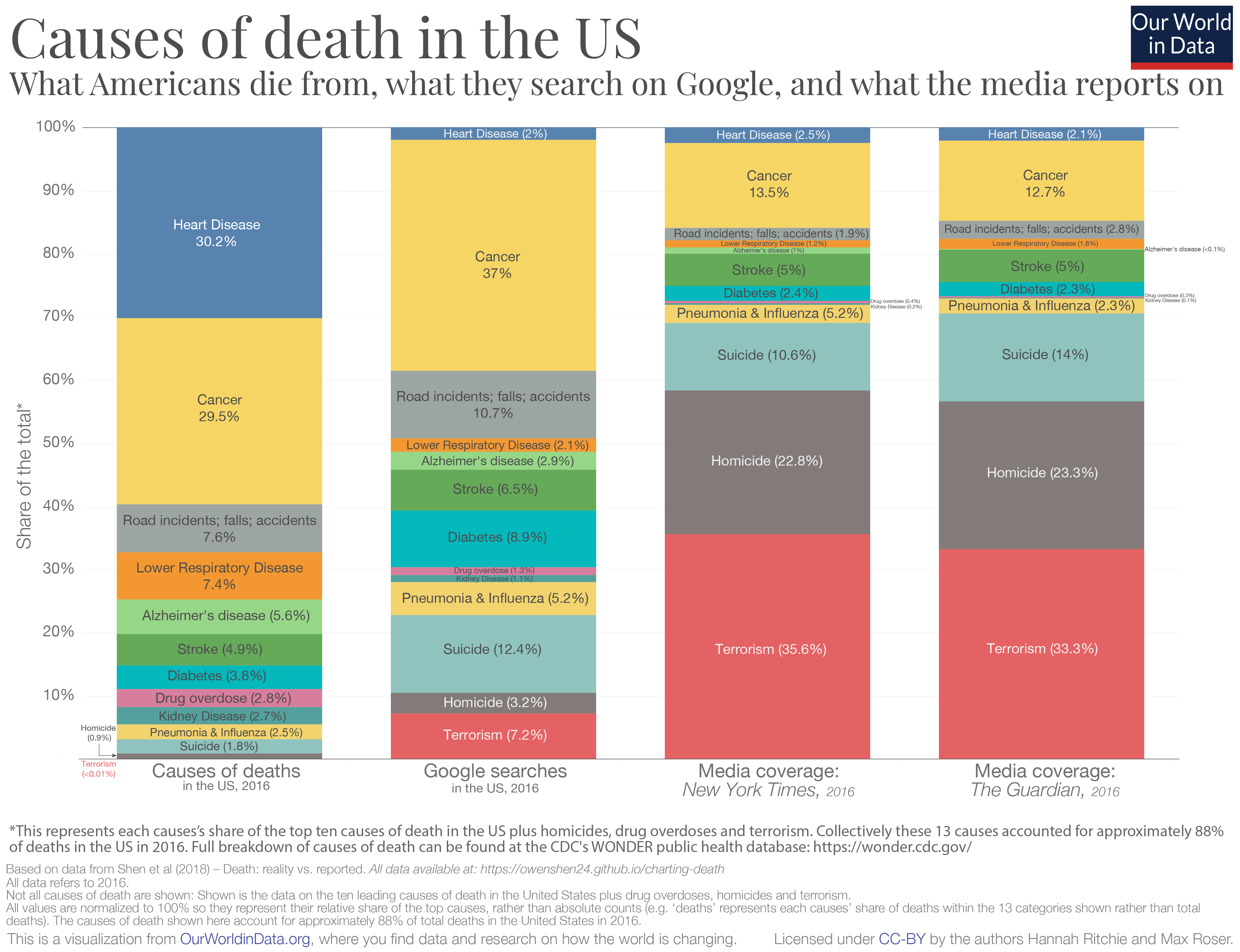

The availability heuristic refers to the intuitive tendency to evaluate a situation using the first example which comes to mind rather than through a careful review of all the available information. It is closely linked with the concept of salience: if a phenomenon gets more media coverage, people will deem it more frequent than another with less media coverage.

For example, let us consider deaths caused by animals. Which animals cause the most deaths worldwide? And if you exclude disease-bearing animals, how would you rank sharks, dogs, scorpions and hippopotamuses?

Figure 2.3: A visualization of the deadliest animals, CC-BY-SA Hannah Ritchie and Max Roser, Our World in Data

Closer to us, here are a few causes of death in France in 2020. How would you rank them from more frequent to less frequent?

Covid-19, Cancers, Murders, Infectious and parasitic diseases (except Covid-19), Road accidents, Cardiovascular pathologies, Self-harm

Here is the ranked table (source: Insee, except for homicides):

| Cause | Number of deaths (thousands) |
|---|---|
| Cancers | 167.6 |
| Cardiovascular pathologies | 131.7 |
| Covid-19 | 68.8 |
| Infectious and parasitic diseases (non-Covid-19) | 10.7 |
| Self-harm | 8.8 |
| Road accidents | 2.0 |
| Murders | 0.9 |
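The gaps in this table are easier to appreciate as ratios. A minimal Python sketch, using only the figures in the table, ranks the causes and expresses each as a multiple of the number of murders (the choice of murders as a baseline is arbitrary).

```python
# Deaths in France in 2020, in thousands (figures from the table above).
deaths = {
    "Covid-19": 68.8,
    "Cancers": 167.6,
    "Murders": 0.9,
    "Infectious and parasitic diseases (non-Covid-19)": 10.7,
    "Road accidents": 2.0,
    "Cardiovascular pathologies": 131.7,
    "Self-harm": 8.8,
}

# Rank causes from most to least frequent, as in the table.
ranking = sorted(deaths, key=deaths.get, reverse=True)

baseline = deaths["Murders"]
for cause in ranking:
    ratio = deaths[cause] / baseline
    print(f"{cause:<50}{deaths[cause]:>7.1f}k  ~{ratio:.0f}x murders")
```

Cancers kill roughly 186 times as many people as murders, and self-harm roughly ten times as many: orders of magnitude worth keeping in mind when comparing with how often each cause makes the news.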

This bias has a pervasive effect on public policy. To a first order, it tends to direct public attention, and thus resources, to issues which are more salient, with little regard to their actual relevance or to the efficiency of the use of these resources. In France, an example is the Vigipirate program. According to most security experts, the deterrence effect of soldiers in the street is negligible, while the program is a huge drain on the human and material capabilities of the French army.

The authors of the Our World in Data website have constructed an infographic comparing actual causes of death in the US with the media coverage of these causes by two reputable news sources in 2016 (The New York Times and The Guardian), as well as with Google searches. It shows that reporting is heavily skewed towards violent deaths, with a low media profile for cardiovascular diseases, although they are the leading cause of death in the US.

Figure 2.4: A visualization of causes of death and media coverage in the US, CC-BY-SA Hannah Ritchie and Max Roser, Our World in Data

2.4.5 Anchoring

The anchoring bias refers to situations where decisions are influenced by a reference point which may be irrelevant, or biased, with regard to the decision. An example can again be found in (Kahneman et al. 1982Kahneman, Daniel, Paul Slovic, and Amos Tversky. 1982. Judgment under uncertainty: heuristics and biases. Cambridge University Press.). Students were asked to quickly estimate the value of 8!, presented either in ascending or in descending order:

A: 1x2x3x4x5x6x7x8

D: 8x7x6x5x4x3x2x1

Students presented with the ascending order gave much lower estimates (median 512) than students presented with the descending order (median 2,250), while the true answer is 40,320. This effect is so well-known that business schools teach it as a negotiating strategy: be the first to state a price (or a wage), since the other party will use it as an anchor for their counter-proposals.
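Why would the order matter? One interpretation, stated here as an assumption rather than as a result of the study, is that with little time people compute the first few multiplications and adjust upward from there. The Python sketch below checks that both orders give 8! and shows how different the partial products are after the same number of steps.

```python
from math import factorial, prod

ascending = list(range(1, 9))       # 1x2x3x4x5x6x7x8
descending = list(range(8, 0, -1))  # 8x7x6x5x4x3x2x1

# Both orders give the same answer: 8! = 40,320.
assert prod(ascending) == prod(descending) == factorial(8) == 40_320


def partial_product(terms, steps):
    # Product of the first `steps` factors, i.e. what a hurried estimator has computed so far.
    return prod(terms[:steps])


for steps in range(1, 9):
    print(f"after {steps} steps: ascending {partial_product(ascending, steps):>6},"
          f" descending {partial_product(descending, steps):>6}")
```

After four steps, the ascending sequence has reached 24 while the descending one is already at 1,680; both reported medians remain far below 40,320, which is consistent with insufficient upward adjustment from these different starting points.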

2.4.6 Overconfidence

Overconfidence is our tendency to overestimate our abilities, our skills, or the accuracy of our answers. Again, there is a canonical illustration: a 1981 survey (Svenson 1981Svenson, Ola. 1981. “Are We All Less Risky and More Skillful Than Our Fellow Drivers?” Acta Psychologica 47 (2): 143–48. https://doi.org/10.1016/0001-6918(81)90005-6.) found that 93% of the American drivers surveyed rated themselves as better drivers than the median driver, an impossibility since at most half of a group can be strictly better than its median (see also the Dunning–Kruger effect).

Overconfidence is an issue in public policy because it affects how we react to some messages. If I deem myself a good driver, I do not need to pay attention to messages telling people how to drive better: they are for bad drivers, not for me. Overconfidence also commonly affects decision-makers, who overestimate their grasp of a problem, of a situation, and even of concepts on which you are the expert.[16]

[16] Mansplaining is a word coined for situations where a man explains to a woman, in a condescending tone, something she knows better than he does.

2.4.7 Framing

The framing effect reflects the fact that we make different choices depending not only on the options we face, but also on the way they are presented. Let us again take an example from our usual suspects (Tversky and Kahneman 1981Tversky, Amos, and Daniel Kahneman. 1981. “The Framing of Decisions and the Psychology of Choice.” Science 211 (4481): 453–58. https://doi.org/10.1126/science.7455683.). A pandemic is about to hit the US, with an expected death toll of 600 people. There are two possible treatments, and there is only enough time and resources to develop and deploy one:

A: 200 people are saved.

B: 1/3 probability all 600 people are saved, and 2/3 that the treatment is useless, and no one is saved.

Now, consider the alternative formulation:

A*: 400 people will die

B*: 1/3 probability no one will die, and 2/3 probability 600 people will die.

In their sample, 72% of people exposed to the first formulation preferred A, and 78% of those exposed to the second preferred B*.
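The two formulations describe exactly the same prospects. A short Python check, using exact fractions to avoid rounding, computes expected survivors and deaths for each option; the data structure (a list of probability and survivor pairs) is just a convenient choice for the illustration.

```python
from fractions import Fraction

TOTAL = 600  # people at risk

# Each option: list of (probability, number of people saved).
options = {
    "A": [(Fraction(1), 200)],
    "B": [(Fraction(1, 3), 600), (Fraction(2, 3), 0)],
    "A*": [(Fraction(1), TOTAL - 400)],                                  # "400 people will die"
    "B*": [(Fraction(1, 3), TOTAL - 0), (Fraction(2, 3), TOTAL - 600)],  # stated in deaths
}


def expected_saved(outcomes):
    return sum(p * saved for p, saved in outcomes)


for name, outcomes in options.items():
    saved = expected_saved(outcomes)
    print(f"{name:>2}: expected saved {saved}, expected deaths {TOTAL - saved}")
```

A and A* are the same sure outcome, and B and B* the same gamble; only the wording (lives saved versus deaths) changes, yet it flips the majority preference.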

Figure 2.5: Sample drinks menu from Starbucks


The power of framing effects is commonly leveraged by private companies to direct clients towards higher value-added products. Starbucks is a known example. At one point, they offered three sizes for drinks. In this case, the framing lies in the pricing. As we see in the picture, the middle (Medium) option, at $5.5, is not a serious business proposition, since the Large one is at $6. It acts as a decoy. On the one hand, it distracts from the small / large choice by offering an intermediate option which makes the small one look possibly too small. On the other hand, the pricing makes it look like a good deal to take a large for only $0.5 more. A few years later, they went further by offering an even larger size, the Venti, and removing the small size from their menu (although you could still order it). This made the large option even more attractive, as it appeared as the middle, balanced option.

2.4.8 Confirmation bias

Confirmation bias is the tendency to process information in a way which confirms, rather than challenges, one’s prior beliefs, values or intuitions. The term itself was coined by psychologist Peter Cathcart Wason in his 1960 paper (Wason 1960Wason, P. C. 1960. “On the Failure to Eliminate Hypotheses in a Conceptual Task.” Quarterly Journal of Experimental Psychology 12 (3): 129–40. https://doi.org/10.1080/17470216008416717.). In this paper, he identifies a procedural bias. On the basis of triplets of numbers, e.g., (2,4,6), people are tasked with inferring the rule the triplets follow. They can do so by presenting examples of triplets to the experimenter, who tells them whether their examples follow the rule or not. Wason showed that his subjects tended to submit examples which conformed to the rule they were envisaging, whereas it would be more efficient to submit non-conforming examples (one case is enough to reject a rule, while a positive case does not exclude alternative rules).
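The logic of the task can be sketched in Python. The hidden rule is taken to be "any three increasing numbers" (the rule used in Wason's task), and the guess "add 2 each time" is a hypothetical but typical hypothesis after seeing (2, 4, 6).

```python
def true_rule(triple):
    # Hidden rule (the one used in Wason's task): any three increasing numbers.
    a, b, c = triple
    return a < b < c


def guess(triple):
    # Hypothetical subject's hypothesis after seeing (2, 4, 6): "add 2 each time".
    a, b, c = triple
    return b - a == 2 and c - b == 2


conforming = [(8, 10, 12), (20, 22, 24), (1, 3, 5)]  # tests the guess says "yes" to
non_conforming = [(1, 2, 3), (6, 4, 2)]              # tests the guess says "no" to

for triple in conforming + non_conforming:
    answer = true_rule(triple)
    refutes = answer != guess(triple)  # any mismatch proves the guess wrong
    print(triple, "experimenter:", "yes" if answer else "no", "| refutes guess:", refutes)
```

All three conforming tests get a "yes" and leave the guess standing, even though it is wrong; only a non-conforming test such as (1, 2, 3) can expose the mismatch.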

The observation that we use the powers of reason first to confirm our beliefs is naturally much older, and you can find illustrations, usually condemning this tendency, from most periods and cultural areas. According to (Mercier and Sperber 2019Mercier, Hugo, and Dan Sperber. 2019. The Enigma of Reason. Harvard University Press.), this is actually not a bug, but a feature of human cognition.

2.5 Policy use of behavioural biases: the Nudge movement and its criticisms

2.5.1 A nudge fad?

From a practical point of view, biases such as these will be a large part of your toolbox, from both an analytical and a solution design point of view. They lie at the core of most interventions described in Nudge: making calorie-rich dessert options less salient by putting them on the second row, or changing savings plans from opt-in to opt-out.

2.5.2 Criticisms

The nudge movement has attracted a set of (valid) criticisms. I lift the following list from (Hallsworth 2023aHallsworth, Michael. 2023a. A Manifesto for Applying Behavioural Science. The Behavioural Insights Team. https://www.bi.team/publications/a-manifesto-for-applying-behavioral-science/.) (in article format (Hallsworth 2023bHallsworth, Michael. 2023b. “A Manifesto for Applying Behavioural Science.” Nature Human Behaviour 7 (3, 3): 310–22. https://doi.org/10.1038/s41562-023-01555-3.)):

- Limited impact: the approach has focused on more tractable and easy-to-measure changes at the expense of bigger impacts; it has just been tinkering around the edges of fundamental problems.

- Failure to reach scale: the approach promotes a model of experimentation followed by scaling, but it has not paid enough attention to how successful scaling happens, and the fact that it often does not happen.

- Mechanistic thinking: the approach has promoted a simple, linear and mechanistic approach to understanding behaviour that ignores second-order effects and spillovers (and employs evaluation methods that assume a move from A to B against a static background).

- Flawed evidence base: the replication crisis has challenged the evidence base underpinning the behavioural insights approach, adding to existing concerns such as the duration of its interventions’ effects.

- Lack of precision: the approach lacks the ability to construct precise interventions and establish what works for whom, and when. Instead, it relies either on overgeneral frameworks or on disconnected lists of biases.

- Overconfidence: the approach can encourage overconfidence and overextrapolation from its evidence base, particularly when testing is not an option.

- Control paradigm: the approach is elitist and pays insufficient attention to people’s own goals and strategies; it uses concepts such as irrationality to justify attempts to control the behaviour of individuals, since they lack the means to do so themselves.

- Neglect of the social context: the approach has a limited, overly cognitive and individualistic view of behaviour that neglects the reality that humans are embedded in established societies and practices.

- Ethical concerns: the behavioural insights approach will face more ethics, transparency and privacy conundrums as it attempts more ambitious and innovative work.

- Homogeneity of participants and perspectives: the range of participants in behavioural science research has been narrow and unrepresentative; homogeneity in the locations and personal characteristics of behavioural scientists influences their viewpoints, practices and theories.

Rather than treating these criticisms in a dedicated rebuttal section, the following lectures will show how a proper application of behavioural knowledge answers these points, or at least alleviates them.

2.6 On the usefulness of a model of behaviour

Consciously or not, every policy is grounded in a model of human behaviour. By construction, you design a ban or a mandate expecting to reduce or foster a given behaviour. Even in cases where there is no specific behavioural target, for example a non-Pigouvian, pure revenue-raising tax, reactions to the tax are commonly expected and modeled.

Figure 2.6: Oude Turfmarkt 141-147, by Massimo Catarinella, CC BY-SA 3.0


Such behavioural adaptations to tax have long-lasting effects. In the 17th century, Amsterdam set up a city tax proportional to the street-facing width of the building. As a result, even rich merchants constructed houses tall, deep, and narrow, with the upper storeys serving as warehouses. This fiscal policy shaped Amsterdam’s distinctive building style.

2.6.1 The Cobra effect

Using an implicit, rather than explicit, model of behaviour is a recipe for failure. Most notably, it exposes you to the Cobra effect, the situation where the behavioural response to your policy makes the initial problem worse. The name stems from a chapter in (Levitt et al. 2006Levitt, Steven D., Stephen J. Dubner, and Anatole Muchnik. 2006. Freakonomics. William Morrow.). The story is set in British-controlled India. The capital, Delhi, was plagued by cobras. In order to reduce their number, the British authorities started offering a bounty for each dead cobra. Soon enough, smart Indians set up profitable cobra farms, as farmed cobras were much easier to catch and kill than wild ones. Upon understanding this, the British cancelled the bounty scheme. Cobra farmers then released their now-worthless snakes, leading to a steep increase in the cobra population in the city.

French people love this story, if only for the occasion to have a laugh at the expense of the British. The thing is that there seems to be no trace of the episode in the records of colonial-era India. Granted, the British did burn a lot of archives just before Indian independence, and may have erased traces of what was not their finest moment. There is, however, good evidence that the French authorities made the very same error in Hanoï around 1902 (Vann 2003Vann, Michael G. 2003. “Of Rats, Rice, and Race: The Great Hanoi Rat Massacre, an Episode in French Colonial History.” French Colonial History 4 (1): 191–203. https://doi.org/10.1353/fch.2003.0027.). The colonial French had just built a state-of-the-art district (intended for white expatriates), with this fundamental feature of civilization: sewers. This made the city a fertile breeding ground for rats: traditional neighbourhoods, with poor sanitation and garbage collection, provided food, while the modern sewers provided protection from predators. The situation was made worse by a resurgence of the plague. Faced with a strike from local rat exterminators, the French authorities offered a bounty for rat tails. Soon enough, rat farms appeared, and tailless rats were seen running around: Vietnamese people preferred to let rats breed rather than kill the resource. The French removed the bounty, and spent months subduing the plague epidemic.

What happened? The colonial civil servants implicitly used a poor model of locals’ rationality. They anticipated a simple response to the economic incentives, and not full profit-maximizing behaviour. Obviously, the colonial context and its disdain for local populations’ capacities played a role. We are, however, not immune to these effects in current societies. Closer to us, a case discussed in (Bénabou and Tirole 2003Bénabou, Roland, and Jean Tirole. 2003. “Intrinsic and Extrinsic Motivation.” The Review of Economic Studies 70 (3): 489–520. https://www.jstor.org/stable/3648598.) provides another infamous illustration. In the 1990s, the Swedish blood donation authority tried to attract more donors by offering money. The number of donors and the quality of blood collected plummeted. The suspicion of giving blood for money crowded out usual donors’ intrinsic motivation, along with a reluctance to appear as needing the money. On the other hand, the payment attracted people in desperate need of money, including drug addicts, which explained the drop in blood quality.

A third example is what happened during the Covid-19 pandemic. Despite earlier warnings about vaccine hesitancy around the measles vaccine, the backlash against the Covid-19 vaccine took many public health professionals by surprise. There was a worldwide pandemic with a significant death toll: obviously, they thought, people would be more than ready to take the shot; it was the only rational thing to do. In France, this phenomenon led Santé Publique France, the French public health authority, to reinforce its behavioural analysis capabilities.

2.6.2 A list of biases is not a model of human behaviour

A list of behavioural biases falls short of being a proper model of human behaviour. All it tells you is that people use different decision rules than rational choice in a (usually rather specific) set of circumstances. It provides no insight into why they deviate from rational choice, nor into how a change in the environment may significantly affect their choices. Furthermore, bias lists can foster the idea that people are irrational (and the title of (Ariely 2010Ariely, Dan. 2010. Predictably irrational: the hidden forces that shape our decisions. HarperCollins publishers.), Predictably Irrational, does not help), which undermines claims to agency (see (Banerjee et al. 2024Banerjee, Sanchayan, Till Grüne-Yanoff, Peter John, and Alice Moseley. 2024. “It’s Time We Put Agency into Behavioural Public Policy.” Behavioural Public Policy 8 (4): 789–806. https://doi.org/10.1017/bpp.2024.6.) on the need to include this dimension in behavioural public policy).

In order to properly design an intervention, you need a working model of the subset of human behaviours you have to deal with.

2.7 Various models for various needs

All models are wrong, but some are useful

For someone trained in economics, the quotation from George Box at the beginning of this document is common knowledge. You cannot possibly expect to have a complete model of the economy. When it comes to analysis or policy advice, choosing the right model is a crucial skill. The same applies to behavioural public policy. We have no complete model of human cognition, so we have to choose the most relevant ones for the task at hand.

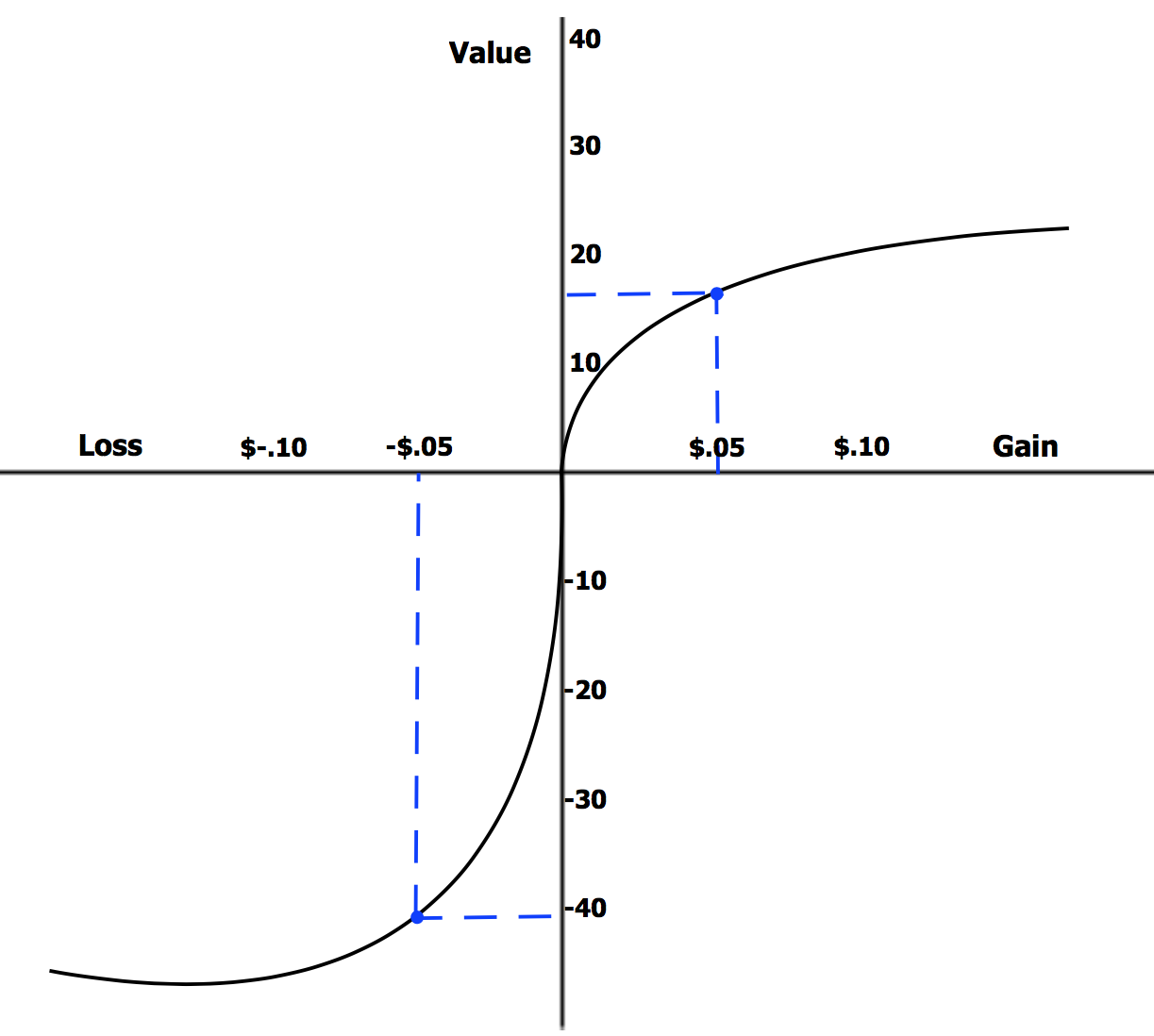

2.7.1 Prospect theory

Prospect theory is probably the most widely accepted theory accounting for several of the biases and heuristics listed in the previous class (Kahneman and Tversky 1979Kahneman, Daniel, and Amos Tversky. 1979. “Prospect Theory: An Analysis of Decision Under Risk.” Econometrica 47 (2): 263–91. https://doi.org/10.2307/1914185.).

Figure 2.7: Shape of the utility function according to the prospect theory. Laurenrosenberger, CC BY-SA 4.0

The curve relating utility (Value on the figure) to gains or losses retains the classical tapering shape at its extremities, corresponding to decreasing marginal utility. It differs, however, from the classical utility curve in several significant ways:

- The origin (0) point is not an absolute zero payoff. It is determined in a first-stage assessment as the relevant reference point, from which gains and losses are computed. It can be my current situation, but also what I observe to be the norm for people like me, or what I assume I deserve (hence the term prospect).

- The curve is asymmetrical near the reference point: losses carry a higher weight than comparable gains.

- The curve is concave in the domain of gains – reflecting risk aversion – and convex in the domain of losses – reflecting risk-seeking.
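The three features above can be made concrete with a small sketch. The functional form (a power function on each side of the reference point) and the parameter values ALPHA = BETA = 0.88 and LAMBDA = 2.25 are not part of the 1979 paper: they are the median estimates later reported by Tversky and Kahneman (1992) for cumulative prospect theory, used here purely as illustrative assumptions.

```python
# Prospect-theory value function: a minimal sketch.
# Functional form and parameters are ASSUMPTIONS taken from Tversky and
# Kahneman (1992), not from the 1979 paper discussed in the text.
ALPHA = 0.88   # curvature in the domain of gains (< 1: concave)
BETA = 0.88    # curvature in the domain of losses (< 1: convex)
LAMBDA = 2.25  # loss-aversion coefficient: losses loom larger than gains


def value(outcome: float, reference: float = 0.0) -> float:
    """Subjective value of an outcome, coded as a gain or loss from the reference point."""
    delta = outcome - reference
    if delta >= 0:
        return delta ** ALPHA
    return -LAMBDA * (-delta) ** BETA


print(value(100))                  # a gain of 100
print(value(-100))                 # a loss of 100 weighs 2.25 times more
print(value(100, reference=200))   # 100 received when expecting 200: felt as a loss
```

Moving the reference point changes how the very same outcome is felt, which is the mechanism behind the endowment effect.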

This shape accommodates the central features of the main departures from expected utility documented at the time. The asymmetry directly encodes loss aversion. If the reference point is indeed calibrated on the situation at the moment of choice, the shape also yields the endowment effect: in the mug experiments, students given the mug for free set a reserve price close to the list price of the mug, and registered any lower offer as a loss relative to that (hypothetical) sale price.

Integral to prospect theory is also a departure from the Von Neumann-Morgenstern framework of using objective probabilities when evaluating lotteries. On the basis of observed lottery choices, prospect theory states that people:

- Overweight small probabilities: a 5% chance subjectively looks more like 10%.

- Underweight high probabilities: a 99% chance subjectively looks more like 90%.
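This distortion of probabilities can be sketched with the one-parameter weighting function later proposed for cumulative prospect theory (Tversky and Kahneman 1992). Both the functional form and GAMMA = 0.61, their estimate for gains, are assumptions brought in for illustration; the 1979 paper only describes the shape qualitatively.

```python
# Probability weighting function: a minimal sketch.
# Form and GAMMA are ASSUMPTIONS from Tversky and Kahneman (1992).
GAMMA = 0.61


def weight(p: float, gamma: float = GAMMA) -> float:
    """Decision weight attached to an objective probability p in [0, 1]."""
    num = p ** gamma
    return num / (num + (1 - p) ** gamma) ** (1 / gamma)


for p in (0.01, 0.05, 0.50, 0.90, 0.99):
    print(f"objective {p:.2f} -> decision weight {weight(p):.3f}")
```

With these parameters, a 5% chance receives a weight of roughly 0.13 and a 99% chance roughly 0.91, the same direction of distortion as in the two bullet points above.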

Combined with the shape of the utility function, a skewed assessment of probabilities models the most commonly observed reversals of preference for risk:

| | Gains | Losses |
|---|---|---|
| High probability | Risk aversion: fear of losing the gain | Risk seeking: hope to avoid the loss |
| Low probability | Risk seeking: hope of gain | Risk aversion: fear of loss |

The top left cell of the table corresponds to the first gamble of the Allais paradox: people prefer €1M for sure to a lottery with a higher expected gain but a 1% chance of getting nothing. The bottom left cell corresponds to the second gamble: the probability of gains is now low, and people switch to risk-seeking.

| Outcome | 1A | 1B | 2A | 2B |
|---|---|---|---|---|
| Nothing | 0% | 1% | 89% | 90% |
| €1M | 100% | 89% | 11% | 0% |
| €5M | 0% | 10% | 0% | 10% |
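The table makes it possible to check why these choices are paradoxical for expected utility theory. Outcomes common to both options of a pair cancel out, so for any utility function the gap between 1A and 1B is exactly the gap between 2A and 2B. The sketch below verifies this numerically, with amounts in millions of euros and three arbitrarily chosen utility functions (assumptions for illustration only).

```python
# Allais paradox: for ANY utility u, EU(1A) - EU(1B) == EU(2A) - EU(2B).
# Amounts are in millions of euros; the utility functions are arbitrary examples.
import math

LOTTERIES = {
    "1A": {1: 1.00},
    "1B": {1: 0.89, 0: 0.01, 5: 0.10},
    "2A": {0: 0.89, 1: 0.11},
    "2B": {0: 0.90, 5: 0.10},
}


def expected_value(lottery):
    return sum(x * p for x, p in lottery.items())


def expected_utility(lottery, u):
    return sum(u(x) * p for x, p in lottery.items())


utilities = {
    "linear": lambda x: x,
    "square root": math.sqrt,
    "log(1 + x)": math.log1p,
}

for name, u in utilities.items():
    gap1 = expected_utility(LOTTERIES["1A"], u) - expected_utility(LOTTERIES["1B"], u)
    gap2 = expected_utility(LOTTERIES["2A"], u) - expected_utility(LOTTERIES["2B"], u)
    print(f"{name:12s} EU(1A)-EU(1B) = {gap1:+.4f}  EU(2A)-EU(2B) = {gap2:+.4f}")
```

Whatever u is, both gaps are identical, so an expected-utility maximiser who picks 1A must also pick 2A. The commonly observed pattern (1A, then 2B) therefore cannot come from any utility function applied to objective probabilities.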

Now, replace the values in the table with penalties. Most people will prefer 1B over 1A: unless you’re rich to begin with, having to pay €1M or €5M would consume most of your lifetime earnings, so it is worth trying for the minuscule 1% chance of avoiding catastrophe. Arguably, 2A would generally be preferred to 2B on the grounds that the adverse outcome is slightly less bad.

As models of this kind go, prospect theory is one of the best behaviourally-informed models of choice: it is rather parsimonious and describes a reasonably wide array of phenomena. Part of its success (and its eventual recognition by a Nobel Prize) also lies in the fact that the choices elicited in the laboratory held up in the field. On the basis of prospect theory, experimenters compared weight-loss or smoking-cessation programs offering certain payoffs (conditional on having met a weight target or a number of non-smoking days) with programs offering lotteries of the same expected value. They observed that entering people in a lottery with a 1% chance of winning $300 provided a stronger incentive than promising $3 for sure if the person hit their target.
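The comparison can be reproduced with the two ingredients of prospect theory: a concave value function for gains and an overweighting of small probabilities. The sketch below reuses the Tversky and Kahneman (1992) parameter estimates as assumptions; it does not model the actual programs, whose designs are not described here, and only shows why the theory predicts that the lottery can be the stronger incentive.

```python
# Certain $3 versus a 1% chance of $300: equal expected values,
# different prospect-theory valuations. Parameters are ASSUMPTIONS
# (Tversky and Kahneman 1992 estimates).
ALPHA, GAMMA = 0.88, 0.61


def value(x: float) -> float:
    return x ** ALPHA  # gains only, so no loss branch is needed here


def weight(p: float) -> float:
    num = p ** GAMMA
    return num / (num + (1 - p) ** GAMMA) ** (1 / GAMMA)


certain = value(3)                   # $3 for sure
lottery = weight(0.01) * value(300)  # 1% chance of $300, nothing otherwise

print(f"expected values: {3:.2f} vs {0.01 * 300:.2f}")
print(f"prospect values: certain {certain:.2f}, lottery {lottery:.2f}")
```

Under these assumptions the overweighted 1% chance more than compensates for the concavity of the value function, and the lottery is valued about three times higher than the certain payment.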

2.7.2 Dual-system theories

The other workhorse model in behavioural public policy is Kahneman’s System 1 / System 2 described in (Kahneman 2011Kahneman, Daniel. 2011. Thinking, fast and slow. Allen Lane.). A large part of the popular success of this model lies in its simplicity. Contrary to prospect theory, it does not imply a representation of utility or risk weighting. It simply posits that the human brain has two main modes, or systems, to make choices. In a nutshell:

Figure 2.8: Cover of the Thinking, Fast and Slow book.

| System 1 | System 2 |
|---|---|
| Fast, intuitive | Slow, reflective |
| Unconscious, low cost | Conscious, high cost |
| Fight or flight | Serious conversation |
| Read a STOP sign | Play a new game |
| 2+2 = … | Choose a mortgage |
| Heuristics | Reason, logic |

System 1 works by matching incoming information to a known pattern, which prompts the response associated with that pattern. In other words, it follows heuristics. This accounts for instinctive responses, such as the fight-or-flight reflex, and acquired ones, such as reading a simple text or associating any red sign with danger and stopping. A key element of this model is that System 1 can be trained, as demonstrated by reading, driving a car or riding a bike. Behavioural biases can thus be seen as errors made by System 1 heuristics relative to what the rational System 2 would have done had it been activated. For example, the Linda problem (conjunction fallacy) stems from the fact that the question looks like a familiar identification problem rather than a more unfamiliar exercise on conditional probabilities, which should have been addressed by System 2.

Conversely, Kahneman observed that information (or cognitive) overload can lead people to hand the decision over to System 1. Choosing a new apartment is the canonical example. You have to consider the rent or the price, closeness to work, shops and amenities, public transportation, surface area, orientation, unreliable information about the neighbours and the neighbourhood, the state of the plumbing and electrical system, layout, etc. Overwhelmed by all this information, it is easy to give up and let System 1 choose based on its heuristics, such as the likeability of the real estate agent or the colour of the shutters. From this perspective, mandatory cooling-off periods, such as the ones you have in France before actually buying a home, are examples of behavioural devices older than this theory, whose purported function is to give conscious thought, a.k.a. System 2, more time to correct System 1 errors. (Mercier and Sperber 2019Mercier, Hugo, and Dan Sperber. 2019. The Enigma of Reason. Harvard University Press.) would contend that their efficacy can be limited, as reason is also very good at coming up with plausible rationales in support of a pre-existing choice.

This model describes a common feature of behavioural biases: they occur overwhelmingly in situations outside our daily experience. When an element becomes a recurring feature of our life, for example reading, learning refines our heuristics to the point where they are fit for purpose. Biases appear when we encounter a new situation and have few previous occasions to learn from. Uncommon, significant and complex decisions, from hiring to buying a house, are thus fertile grounds for biases.

Of course, we should remain aware that this is just a model. In behavioural public policy settings, it is useful in part because it maps very well onto the preconception that policies should foster the use of reason (identified with System 2). In terms of research, (De Neys 2021De Neys, Wim. 2021. “On Dual- and Single-Process Models of Thinking.” Perspectives on Psychological Science: A Journal of the Association for Psychological Science 16 (6): 1412–27. https://doi.org/10.1177/1745691620964172.) argues that there is currently no conclusive evidence in favour of either dual-system or single-system models (in the latter, differences are of degree and not of kind).

2.7.3 Self-determination theory

Self-determination theory is often drawn upon in behavioural public policy to analyse and act on observed gaps between intention and action, and to anticipate the behavioural outcome of monetary incentives. The term actually refers, most of the time, to the organismic integration theory of motivation described in (Deci and Ryan 1985Deci, Edward L., and Richard M. Ryan. 1985. Intrinsic motivation and self-determination in human behavior. New York, United States.) and (Ryan and Deci 2000Ryan, Richard M., and Edward L. Deci. 2000. “Self-Determination Theory and the Facilitation of Intrinsic Motivation, Social Development, and Well-Being.” American Psychologist (US) 55 (1): 68–78. https://doi.org/10.1037/0003-066X.55.1.68.). The table below sums up the main types of motivation, from no motivation (top) to self-determined motivation (bottom). These types form a continuum:

- Behaviours I undertake because I am forced to (external motivation, e.g., one thinks smoking cannabis should be legal, but refrains from smoking out of fear of police control).

- Behaviours I know I should adopt, but must force myself to perform, and do not feel are part of my identity (e.g., grading).

- Behaviours I actively want to have, because I value them, but still find costly (e.g., going to the gym).

- Behaviours I feel are part of what I am and want to be (e.g., providing full handouts).

- Behaviours I have because they are part of my identity and in which I engage for their own sake (e.g., aikido training).

| Motivation | Type | Source | Regulation |
|---|---|---|---|
| Amotivation | Non-regulation | Impersonal | Non-intentional, non-valuing, incompetence, lack of control |
| Extrinsic | External | External | Compliance, external rewards and punishments |
| Extrinsic | Introjected | Somewhat external | Self-control, ego-involvement, internal rewards and punishments |
| Extrinsic | Identified | Somewhat internal | Personal importance, conscious valuing |
| Extrinsic | Integrated | Internal | Congruence, awareness, synthesis with self |
| Intrinsic | Intrinsic | Internal | Enjoyment, inherent satisfaction |

One example of application of this framework is in interventions with job-seekers. A common complaint from job counsellors is that some people registered as job-seekers perform job-seeking activities only under the pressure of sanctions (typically a penalty on their unemployment allowance). As a result, their efforts are ineffective, and they fail to find jobs or acquire new skills. Counsellors are thus often looking for ways to move job-seeking from an externally mandated activity to an identified or integrated one. Such interventions may involve addressing self-esteem or locus-of-control issues among job-seekers.

Another example is the behavioural analysis of incentives. As we saw in the blood donation case, one type of motivation may crowd out others. This is particularly the case with the application of new public management to public service delivery. It has been shown that relying on a few quantitative measures leads to lower performance in roles requiring personal judgement of complex cases or creativity. As with blood donation, performance-based rewards can crowd out more self-determined motivation for doing the job well, e.g., finding the best solution for a family in distress.

2.8 The evolutionary perspective

As useful as they are, prospect theory and dual-system models mainly kick the can further down the road when it comes to explaining why most people use some heuristics rather than other possible ones, and why observed heuristics fail to provide an adequate response in some contexts but not in others. The cost element of the System 1 / System 2 model restored some rationality to the overall picture by positing that the choice between one system and the other (a kind of meta-heuristic) follows a cost-error trade-off: System 1 is more error-prone, but System 2 is more costly. This raises the question of the relevant cost: time, calories and attention are plausible candidates depending on the context.
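The cost-error trade-off can be written as a comparison of expected costs: effort cost plus error probability times the stakes of the decision. The sketch below uses made-up numbers (all hypothetical) to show the qualitative prediction: System 1 wins for everyday, low-stakes choices, System 2 for rare, high-stakes ones.

```python
# Toy cost-error trade-off between the two systems.
# All parameter values are HYPOTHETICAL, chosen only for illustration.
from dataclasses import dataclass


@dataclass
class System:
    name: str
    effort_cost: float  # time, calories, attention... in arbitrary units
    error_rate: float   # probability of a costly mistake


FAST = System("System 1", effort_cost=1.0, error_rate=0.20)
SLOW = System("System 2", effort_cost=10.0, error_rate=0.02)


def expected_cost(system: System, stakes: float) -> float:
    """Effort spent plus the expected loss from errors."""
    return system.effort_cost + system.error_rate * stakes


def choose_system(stakes: float) -> str:
    """Meta-heuristic: use whichever system has the lower expected cost."""
    return min((FAST, SLOW), key=lambda s: expected_cost(s, stakes)).name


for stakes in (5, 100, 1000):
    print(stakes, choose_system(stakes))
```

With these numbers the break-even point sits at stakes of 50. What effort_cost actually measures is precisely the open question raised above.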

The evolutionary approach provides an overarching framework to answer these questions. Firstly, it provides a cost-benefit measure through reproductive fitness: the net gain or cost of a behaviour or a heuristic is its impact on the person’s reproductive odds in the given context. Secondly, it posits that heuristics are selected through natural selection: people using more adaptive heuristics at a given point are more likely to reproduce, and thus to pass these heuristics on to their offspring. Where a simple cost-benefit optimization theory would predict convergence towards the same heuristics, evolutionary theory can accommodate the coexistence of a wide array of heuristics in response to similar problems: evolutionary pressures are consistent with a diverse set of solutions as long as their reproductive fitness is broadly comparable.
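A minimal way to see how selection operates, and why near-equal fitness leaves room for diversity, is discrete-time replicator dynamics: each generation, a heuristic’s share of the population is multiplied by its fitness relative to the population average. This textbook model is swapped in for illustration and does not come from the sources cited here; the fitness values are hypothetical.

```python
# Discrete-time replicator dynamics for two heuristics, A and B.
# Fitness values are HYPOTHETICAL; the model ignores mutation, drift
# and frequency-dependent fitness.
def share_of_a(initial_share: float, fitness_a: float, fitness_b: float,
               generations: int) -> float:
    x = initial_share
    for _ in range(generations):
        mean_fitness = x * fitness_a + (1 - x) * fitness_b
        x = x * fitness_a / mean_fitness  # A grows with its relative fitness
    return x


# Nearly equal fitness: both heuristics remain widespread after 100 generations.
print(share_of_a(0.5, 1.001, 1.000, 100))
# Clear fitness gap: A almost completely displaces B over the same period.
print(share_of_a(0.5, 1.100, 1.000, 100))
```

With a 0.1% fitness edge, heuristic A only moves from 50% to roughly 52.5% of the population in 100 generations; with a 10% edge it almost completely displaces B. In this deterministic model any constant advantage eventually wins, so lasting coexistence would require ingredients left out here, such as frequency-dependent fitness or changing environments.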

By construction, an evolutionary approach underlines adaptation to the conditions prevalent during most of our species’ history: close-knit hunter-gatherer societies, where food was relatively abundant, but its amount uncertain and volatile. This explains some behaviours, such as most people’s difficulty with self-control around sweet foods, which were adaptive in such an environment and are maladaptive when food is over-abundant. In other words, it shows how behaviours labelled as biases are in fact rational, and at times optimal in the sense of reproductive fitness – this is the whole point of (Page 2021Page, Lionel. 2021. Optimally Irrational: The Good Reasons We Behave the Way We Do. 1st ed. Elsevier.). Moreover, it flags some biases as artefacts, that is, as resulting from situations too recent in our history for efficient heuristics to have developed and been selected (Haselton et al. 2015Haselton, Martie G., Daniel Nettle, and Paul W. Andrews. 2015. “The Evolution of Cognitive Bias.” In The Handbook of Evolutionary Psychology. John Wiley & Sons, Ltd. https://doi.org/10.1002/9780470939376.ch25.).

Cultural evolutionary theory goes one step further, taking into account not only the information transmitted through genes and epigenetics, but also the transmission of social and cultural information through social learning.

Policy-wise, evolutionary theory offers both a welcome explanation and a cautionary tale. It provides grounds for asserting that the behaviours under consideration are not products of some inherent irrationality of human cognition, but an efficient (i.e. rational) answer to a set of circumstances. This focus on adaptation to context is often central to a good analysis of a policy issue.

Let us take an example lifted from (Colombi 2019Colombi, Denis. 2019. Où va l’argent des pauvres: fantasmes politiques, réalités sociologiques. Payot.): at the beginning of each month, it is common to see mothers in poor neighbourhoods going grocery shopping and spending a large share of their monthly budget on high-calorie products, such as chocolate spreads, snacks and so on. Demonstrably, weekly shopping for fresh fruit and vegetables would be better for their families’ health, at the same or lower cost. Yet information campaigns seem to have no impact on them. Popular discourse paints them as poor financial planners, irrationally attracted to the immediate appeal of junk food, which leads to interventions around financial planning and self-control. Sociologists have shown that such interventions are misguided. It sounds like a tautology that the main problem of poverty is a lack of money. Yet this means that these mothers live in an environment where any cash or deposits they have can be taken away at a moment’s notice, because of fees (the famous high cost of being poor: because they carry a nonpayment risk, poor people typically pay much more than non-poor people for the same or worse services), social security clawbacks or some family member in urgent need of cash. The products bought by these mothers when they have some money are not only calorie-rich, they are also durable. Whatever else happens, they are sure that their children will have something, perhaps unhealthy, to eat until next month. In other words, they are rationally buying the only kind of insurance they can afford.

Evolutionary theory also cautions against the tendency to fight biases. The rational agent still holds a lot of normative clout, and more often than not, the tendency will be to try to suppress what is identified as a bias. If the bias is actually adaptive in the circumstances, as above, such attempts are likely to fail. If the heuristic from which the bias stems has been present for a long time, it will likely be difficult to change in short order. People may change their behaviour when exposed to a change in their environment, but will revert to their default behaviour if the change is removed, hence the difficulty of translating many nudges into durable behaviour change.

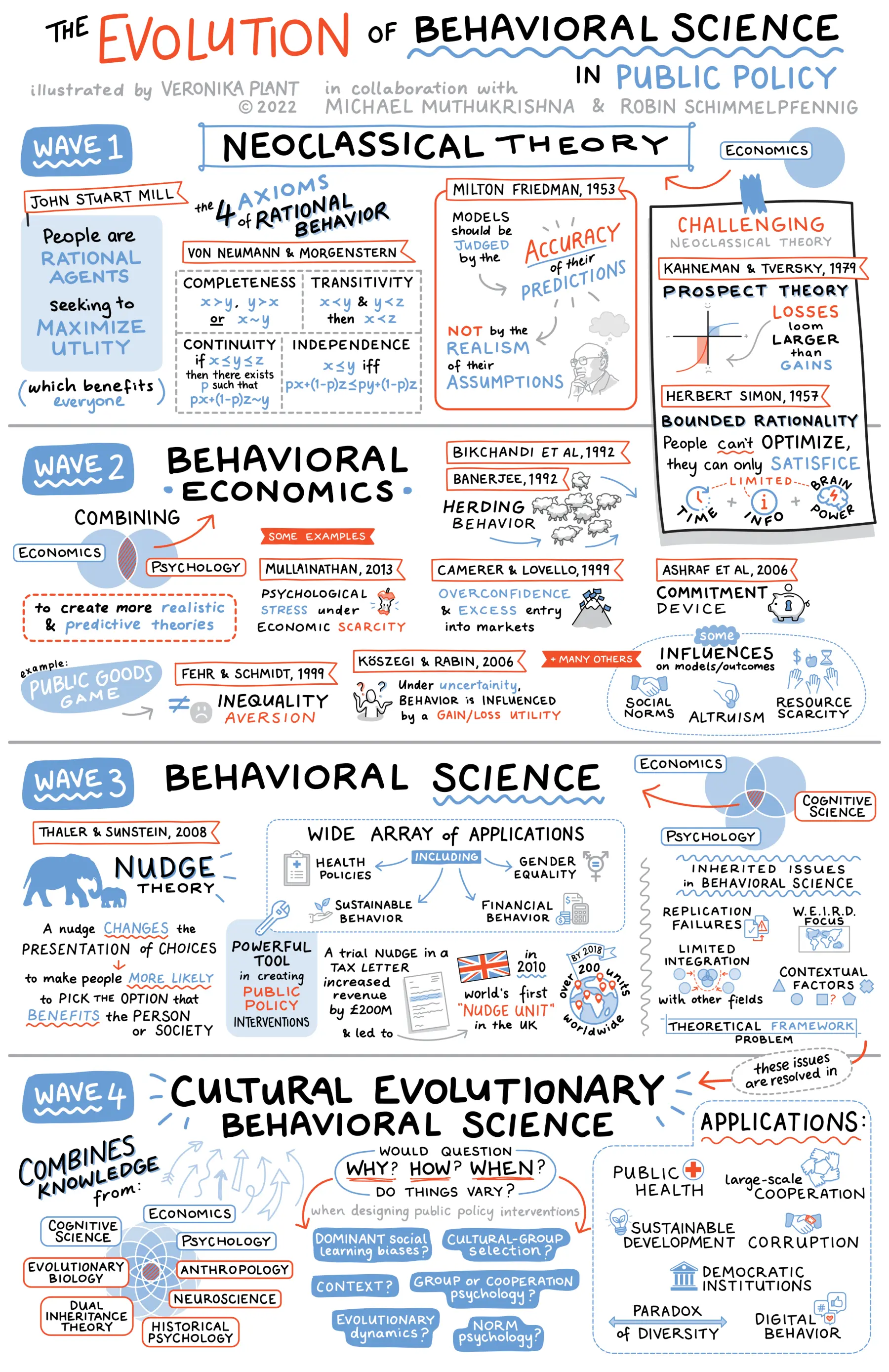

Fundamentally, it implies that we should stop designing public policies around a rational agent model and using behavioural insights to patch the cases where actual behaviour differs, and instead start designing policies from scratch around actual behaviours. This, of course, is a collective endeavour, requiring input from a diverse set of scientific fields and methods, as underlined in this graphic from (Schimmelpfennig and Muthukrishna 2022Schimmelpfennig, Robin, and Michael Muthukrishna. 2022. Cultural Evolutionary Behavioral Science in Public Policy. Templeton World. https://live-templeton-next-nhemv.appa.pantheon.site//blog/cultural-evolutionary-behavioral-science-public-policy.).

Figure 2.9: The Evolution of Behavioural Science, used with permission of the authors.

2.9 Making things simpler

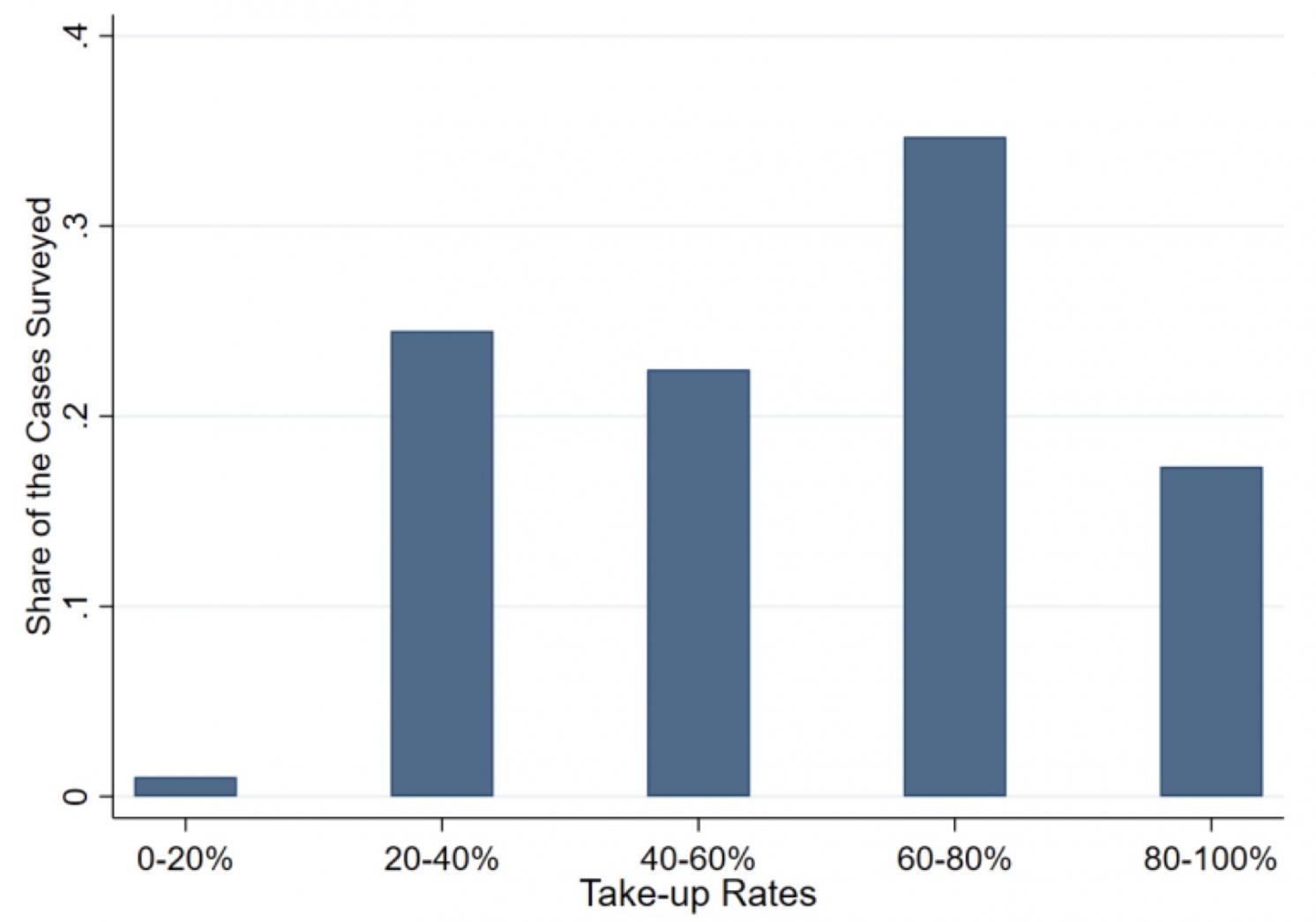

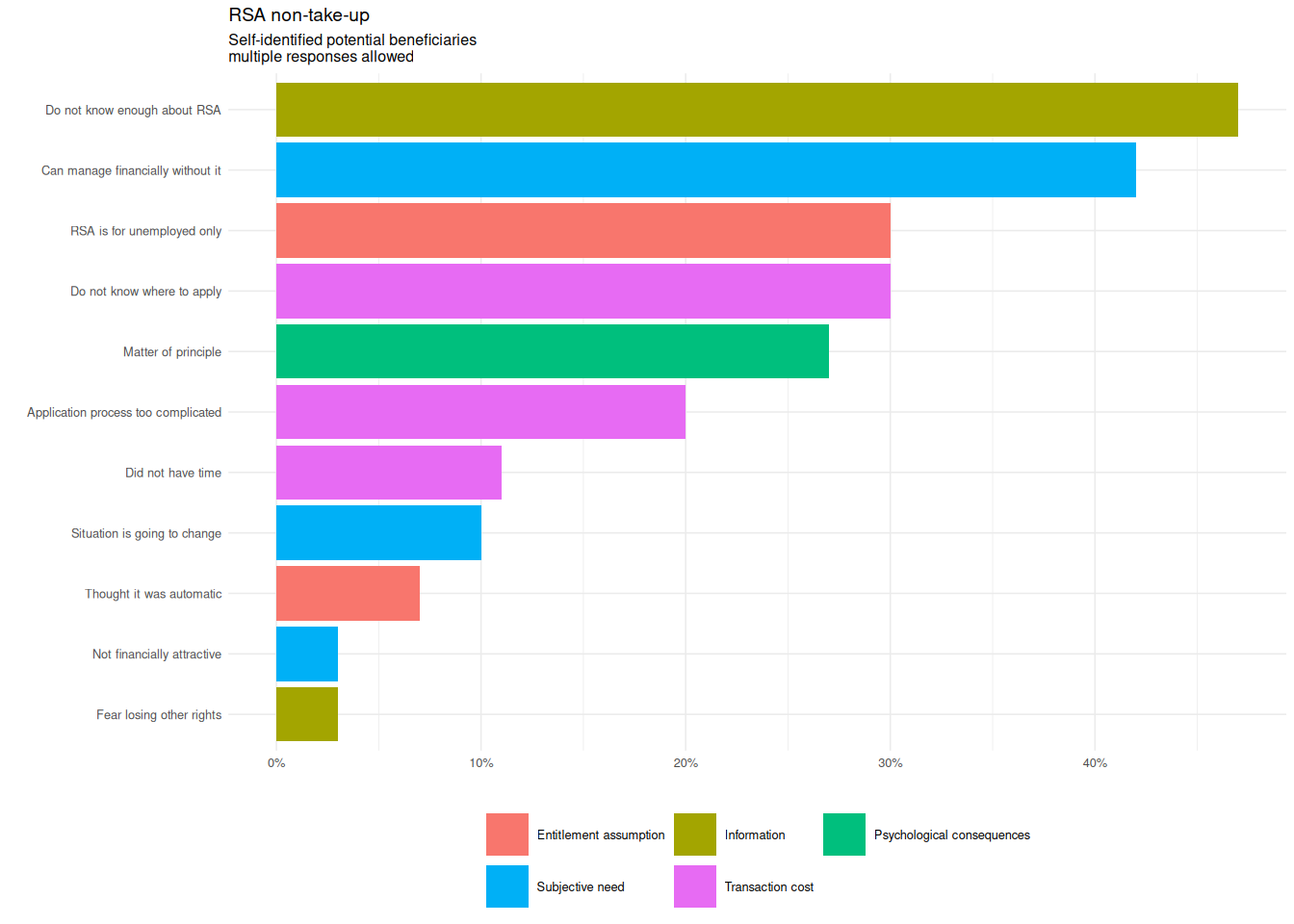

Figure 2.10: Take-up rates.

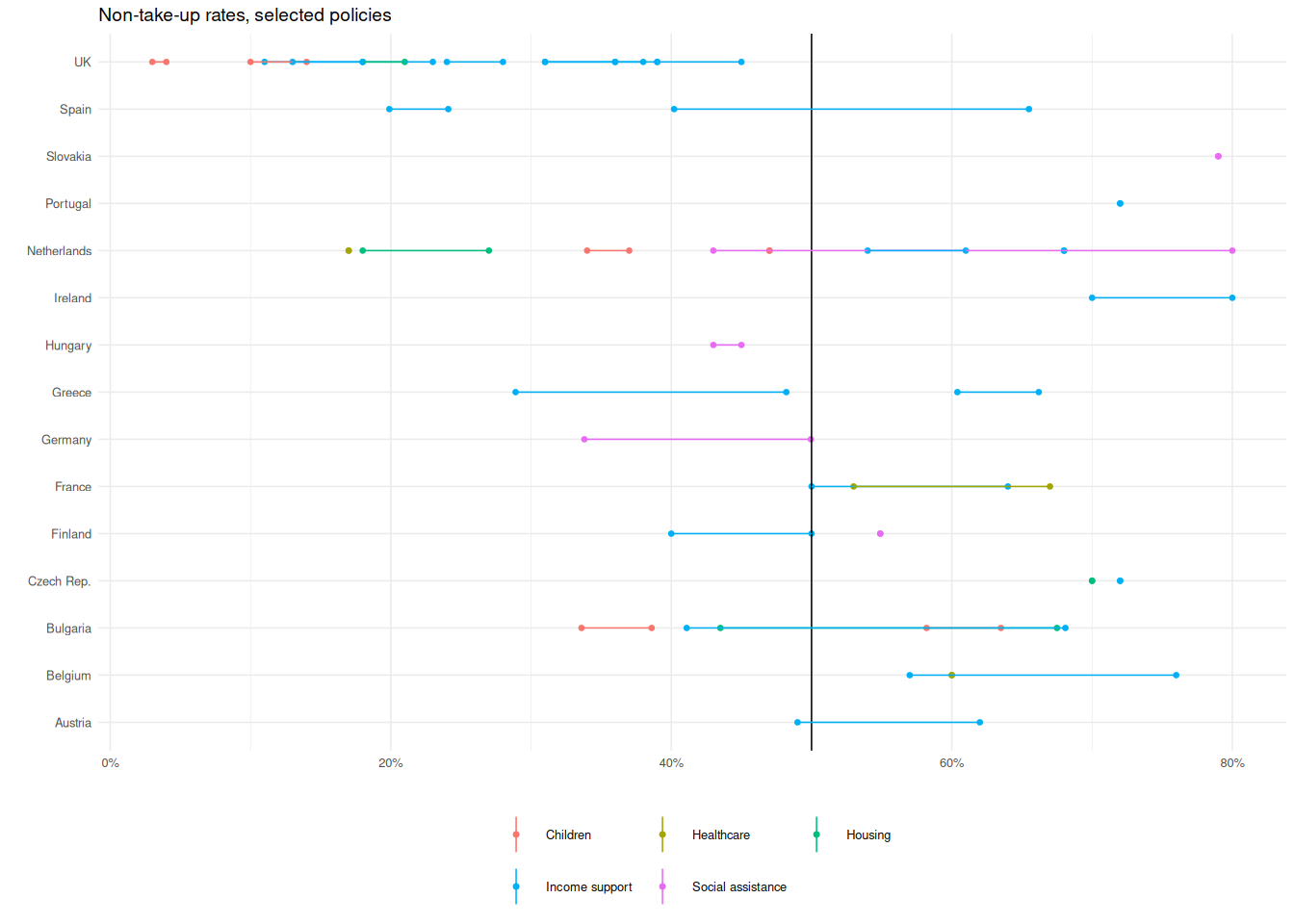

To illustrate this, I’ll focus here on the issue of non-take-up. Across countries, we observe that most social policies struggle to reach even half of the population entitled to them (Ko and Moffitt 2022Ko, Wonsik, and Robert A. Moffitt. 2022. Take-up of Social Benefits. Working Paper No. 30148. Working Paper Series. Preprint. https://doi.org/10.3386/w30148.). Older sources show that this concerns all domains of social policy, including in rich countries (Dubois and Ludwinek 2015Dubois, Hans, and Anna Ludwinek. 2015. Access to Social Benefits: Reducing Non-Take-up. Eurofound, Publications Office of the European Union. https://www.eurofound.europa.eu/publications/report/2015/social-policies/access-to-social-benefits-reducing-non-take-up.). In the figure below, the UK appears as an outlier: the take-up issue has been a priority there for some time now, which shows that higher take-up rates are achievable.

Figure 2.11: Take-up rates, OECD countries.

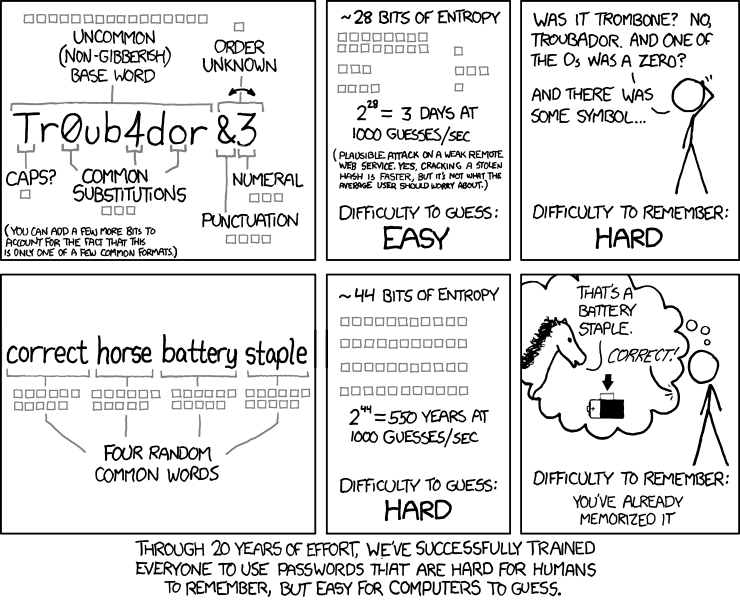

2.9.2 Designing passwords

Let us take a simpler problem: computer passwords. Passwords are a significant element of our daily security: online impersonation may have long and deep consequences, and even seemingly innocuous data breaches may have significant adverse effects. Hence the question: how do we design passwords that are (i) secure and (ii) easy to remember? Ease of remembering is the behavioural motive here: a difficult-to-remember password will likely end up written down somewhere (a Post-It under the keyboard, or directly on the screen), or will have to be reset often, opening avenues to fraudulent resets.

It happens that most password rules currently in place fail on both counts: they encourage passwords that are hard to remember, yet easy to crack through brute force or rainbow tables, as illustrated by this XKCD strip.

Figure 2.14: R. Munroe, XKCD 936, CC-BY-NC 2.5

Behaviourally speaking, the argument used in the second row of the strip hinges on pareidolia, the tendency to find a pattern in random elements – here, four random words.
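The security half of the strip’s argument is simple arithmetic. The strip assumes four words drawn at random from a list of 2,048 common words (11 bits each) and an attacker making 1,000 guesses per second; its 28-bit figure for a “Tr0ub4dor&3”-style password is the strip’s own estimate for a common word with predictable substitutions, not a value computed here. The sketch below recomputes the worst-case cracking times under those assumptions.

```python
# Entropy arithmetic behind XKCD 936. The word-list size, the guess rate
# and the 28-bit estimate are taken from the strip, not derived here.
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def entropy_bits(pool_size: int, picks: int) -> float:
    """Bits of entropy of `picks` independent uniform draws from `pool_size` items."""
    return picks * math.log2(pool_size)


def seconds_to_exhaust(bits: float, guesses_per_second: float) -> float:
    """Worst-case time to try every possibility."""
    return 2 ** bits / guesses_per_second


passphrase_bits = entropy_bits(2048, 4)  # four random common words
pattern_bits = 28                        # strip's estimate for "Tr0ub4dor&3"

years = seconds_to_exhaust(passphrase_bits, 1000) / SECONDS_PER_YEAR
days = seconds_to_exhaust(pattern_bits, 1000) / 86400
print(f"passphrase: {passphrase_bits:.0f} bits, about {years:.0f} years")
print(f"pattern:    {pattern_bits} bits, about {days:.1f} days")
```

The numbers match the strip’s orders of magnitude (around 550 years versus about 3 days). The entropy of the passphrase only holds if the words are genuinely drawn at random, not chosen by the user.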

In terms of policy, I find this example extremely relevant. According to a report by the Javelin consultancy, identity fraud and scams affected 42 million US consumers in 2022, for a total cost of $52 billion and a median cost of $1,1, not counting the time and psychological cost of cleaning things up, which can take months or years. Most of the time, these frauds are not linked to massive data breaches (such breaches do facilitate attacks, by exposing email addresses and phone numbers, and in some cases the hashed versions of passwords, which reveal which users have common passwords). Aside from impersonation scams, attacks on weak passwords for email and social media accounts are a common tactic to gain access to victims’ sensitive information. Computer security thus has a large behavioural dimension. So why are we failing so badly at it?

Originally, there was a technical choice: at a time when computing power and digital storage were expensive, and most computers were tied only to internal networks, shorter passwords were cheaper. There was at this point a conscious choice to pass the cost on to users, in the form of the effort necessary to remember short but meaningless passwords (hence the Post-It strategy). Over time, computation and storage costs became negligible, but there was considerable technical debt: services still rely on old security modules, which limit the length of passwords.

Nowadays, you’ll see many websites using a behavioural patch: when you choose a new password, there is a dynamic list of constraints, which turn green as the password you type meets them. This is an example of the end-of-process use of behavioural insights, with limited efficacy if people just store the password in their browser, behind a weak session password. A behaviourally-informed security policy would be to advise people to use much longer passphrases, including by suggesting a set of randomly drawn words in the user’s language.
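A passphrase suggestion feature of this kind can be very small. The sketch below is a hypothetical illustration: WORDS is a tiny made-up sample and suggest_passphrase is an invented helper name, whereas a real service would draw from a large curated list in the user’s language.

```python
# Minimal passphrase suggester (hypothetical helper, toy word list).
import secrets
from typing import Sequence

# HYPOTHETICAL sample; a real list would hold thousands of words.
WORDS = [
    "apple", "river", "candle", "orbit", "tiger", "velvet", "harbor", "puzzle",
    "meadow", "rocket", "silver", "lantern", "pepper", "glacier", "violin", "cactus",
]


def suggest_passphrase(words: Sequence[str], n_words: int = 4, sep: str = "-") -> str:
    """Draw n_words uniformly at random with a cryptographically secure generator."""
    return sep.join(secrets.choice(words) for _ in range(n_words))


print(suggest_passphrase(WORDS))
```

Two design choices matter here. The `secrets` module is used rather than `random`, which is not designed for security purposes. The size of the word list sets the strength: each word adds log2(len(WORDS)) bits, only 4 bits with this toy list but 11 bits with a 2,048-word list such as the one assumed in the XKCD strip.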

It is also an illustration of how behavioural insights are not applied in a technical vacuum. To implement this kind of change, you would have to work with web service managers, who often have a limited grasp of security, as well as with developers and user interface designers.

2.9.3 Communicating efficiently

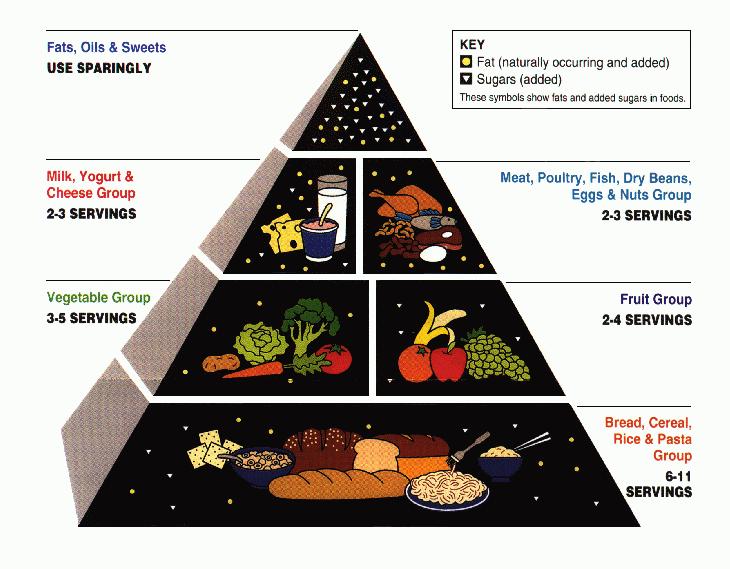

Applied behavioural insights are often solicited to help with information provision. Typically, a public agency has tried for years to teach something to the population, but the message does not seem to get through. An example is the food pyramid, which summarises what a balanced diet looks like (example below; Wikimedia Commons has a whole category for them).

Figure 2.15: USDA Food Pyramid

This representation is sharply criticized in (Sunstein 2013Sunstein, Cass R. 2013. Simpler: the future of government. Simon & Schuster.).

- To begin with, the shape of the representation is confusing: should the share be proportional to the area devoted to each group, or to the implied volume, which are not proportional to each other? In the words of (Tufte 2001Tufte, Edward. 2001. The Visual Display of Quantitative Information. Second edition. Graphics Press.), this is a problem of data integrity, with a lie factor (the ratio between the two) increasing as we go towards the top of the pyramid.

- The food groups are not very straightforward (to the point that a key is needed for the top group).

- The less desirable groups are placed on top, a position usually associated with what is more desirable (and chimes with our inborn taste for them).

- The meaning of “servings” is unclear, with no direct connection to our daily eating experience – all the more so as the time frame is not specified (probably a week). Implicitly, this targets people in charge of buying food, planning meals and cooking them.
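The area-versus-volume problem raised in the first point can be quantified with a little geometry. Assuming equal-height horizontal bands (an assumption: the actual pyramid divides its layers differently), the part of a triangle above depth h from the apex has an area proportional to h², while the part of a square-based pyramid above the same depth has a volume proportional to h³. The sketch compares the two shares band by band.

```python
# Area share (flat triangle) versus volume share (square-based pyramid)
# for equal-height bands. Geometry and band layout are ASSUMPTIONS.
def band_shares(n_bands: int):
    """Yield (area_share, volume_share) for each band, from apex (top) to base."""
    for i in range(n_bands):
        top, bottom = i / n_bands, (i + 1) / n_bands  # depth from the apex, 0..1
        area = bottom ** 2 - top ** 2    # triangle area above depth h scales as h^2
        volume = bottom ** 3 - top ** 3  # pyramid volume above depth h scales as h^3
        yield area, volume


for band, (area, volume) in enumerate(band_shares(4), start=1):
    print(f"band {band} (from top): area {area:.3f}, volume {volume:.3f}, "
          f"area/volume {area / volume:.2f}")
```

The ratio is 4 for the top band and falls below 1 at the base, so the same band looks much bigger read as an area than read as a volume near the top: the distortion grows towards the top of the pyramid. This is a loose, illustrative reading of the lie factor, not Tufte’s exact definition.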

A much better alternative has been produced by the UK Food Standards Agency.

Figure 2.16: UK FSA guide plate graphic.

Compared with the US version, this representation is much closer to our daily experience: it is about what we have on our plates. The size constraint is used here as a feature: instead of a key which magnifies fat and sugar, high-fat and high-sugar items are more difficult to see (you probably had to go back to the illustration and look for them when reading this paragraph). Conversely, more desirable food groups are represented in a diverse way, close to daily experience. The overall shape is flat, without bogus and confusing 3D effects.

Visually speaking, this is still not optimal. Human perception is inaccurate at judging angles. Here, is the yellow share larger than the green one, or the reverse? The blue share seems larger than the pink one, but by how much? However, this may be a price worth paying for using the familiar round plate shape.

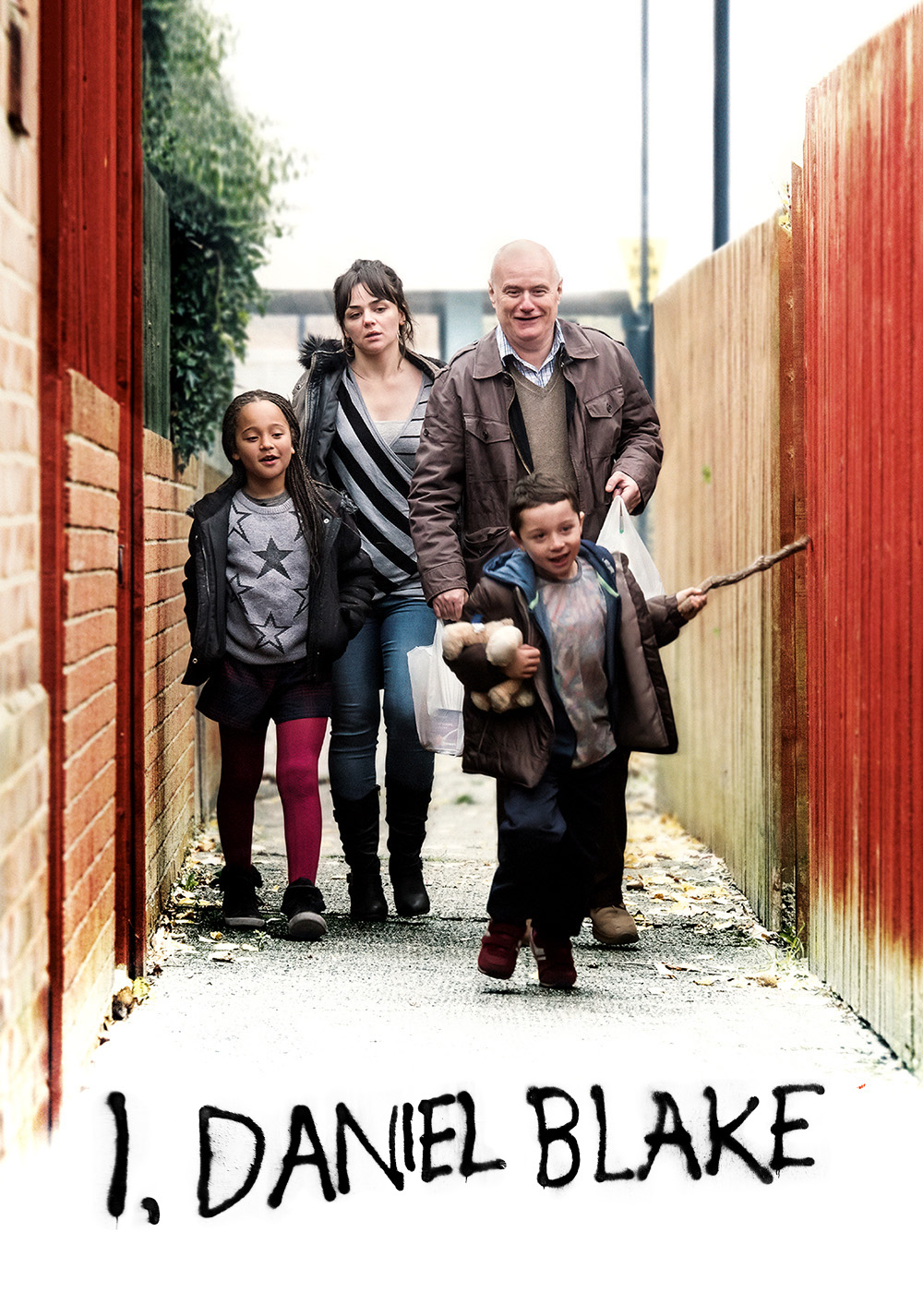

2.9.4 Sludge

Sunstein has suggested the label of Sludge audits (Sunstein 2020Sunstein, Cass R. 2020. “Sludge Audits.” Behavioural Public Policy, January 6, 1–20. https://doi.org/10.1017/bpp.2019.32.) for the practice of going through a process in order to identify and alleviate its behavioural barriers. This approach already underpinned the experiments in higher education access described in (Bettinger et al. 2012Bettinger, Eric P., Bridget Terry Long, Philip Oreopoulos, and Lisa Sanbonmatsu. 2012. “The Role of Application Assistance and Information in College Decisions: Results from the H&R Block Fafsa Experiment.” The Quarterly Journal of Economics 127 (3): 1205–42. https://doi.org/10.1093/qje/qjs017.) and (Dynarski et al. 2018Dynarski, Susan, C. J. Libassi, Katherine Michelmore, and Stephanie Owen. 2018. Closing the Gap: The Effect of a Targeted, Tuition-Free Promise on College Choices of High-Achieving, Low-Income Students. Working Paper No. 25349. Working Paper Series. National Bureau of Economic Research. https://doi.org/10.3386/w25349.), which are the two foundational papers for the practical case. What Sunstein underlines is the need for a systematic analysis of administrative processes, with an explicit discussion of the objectives and necessity of each step. To a large extent, it is an illustration of the first proposal in (Hallsworth 2023aHallsworth, Michael. 2023a. A Manifesto for Applying Behavioural Science. The Behavioural Insights Team. https://www.bi.team/publications/a-manifesto-for-applying-behavioral-science/.): use behavioural insights as a lens.

Figure 2.13: Film poster for I, Daniel Blake.